- Copilot Studio allows multilingual agents, but the primary language is set when creating the agent and only its region can be changed.

- Secondary languages require downloading, translating, and re-uploading localization files, keeping all strings synchronized.

- Voice in Copilot integrates recognition, wait times, interruption, SSML and call transfer, with extensive language options.

- Microsoft 365 Copilot supports fewer languages than the application interface, so you sometimes have to rephrase requests in a supported language.

If you've started using Copilot and are wondering how to change the Copilot voice language, or how to make it understand and respond in different languages, you're not alone. Between Copilot Studio, Microsoft 365 Copilot, voice features in Dynamics 365 Contact Center, and guides on using Hey Copilot and voice in Windows 11, there are many options, and also quite a few nuances worth knowing.

Below you will find a very complete guide, written in clear language, to what you can and can't do with languages, voice, and region in Copilot: from setting up multilingual agents in Copilot Studio to learning which languages Microsoft 365 Copilot supports, how interactive voice works, and what limitations you'll encounter on a daily basis.

Languages and primary language region in Copilot Studio

Copilot Studio allows you to create agents in many different languages, so you can reach users in multiple countries without duplicating the entire project. However, there's one key rule you must remember: the agent's primary language is chosen only when creating the agent and cannot be changed afterward.

What you can do, if the language allows it, is change the region associated with that primary language. For example, if your agent is in Spanish, you could switch from the Spain variant to another compatible region, provided it is available in the region list for that language.

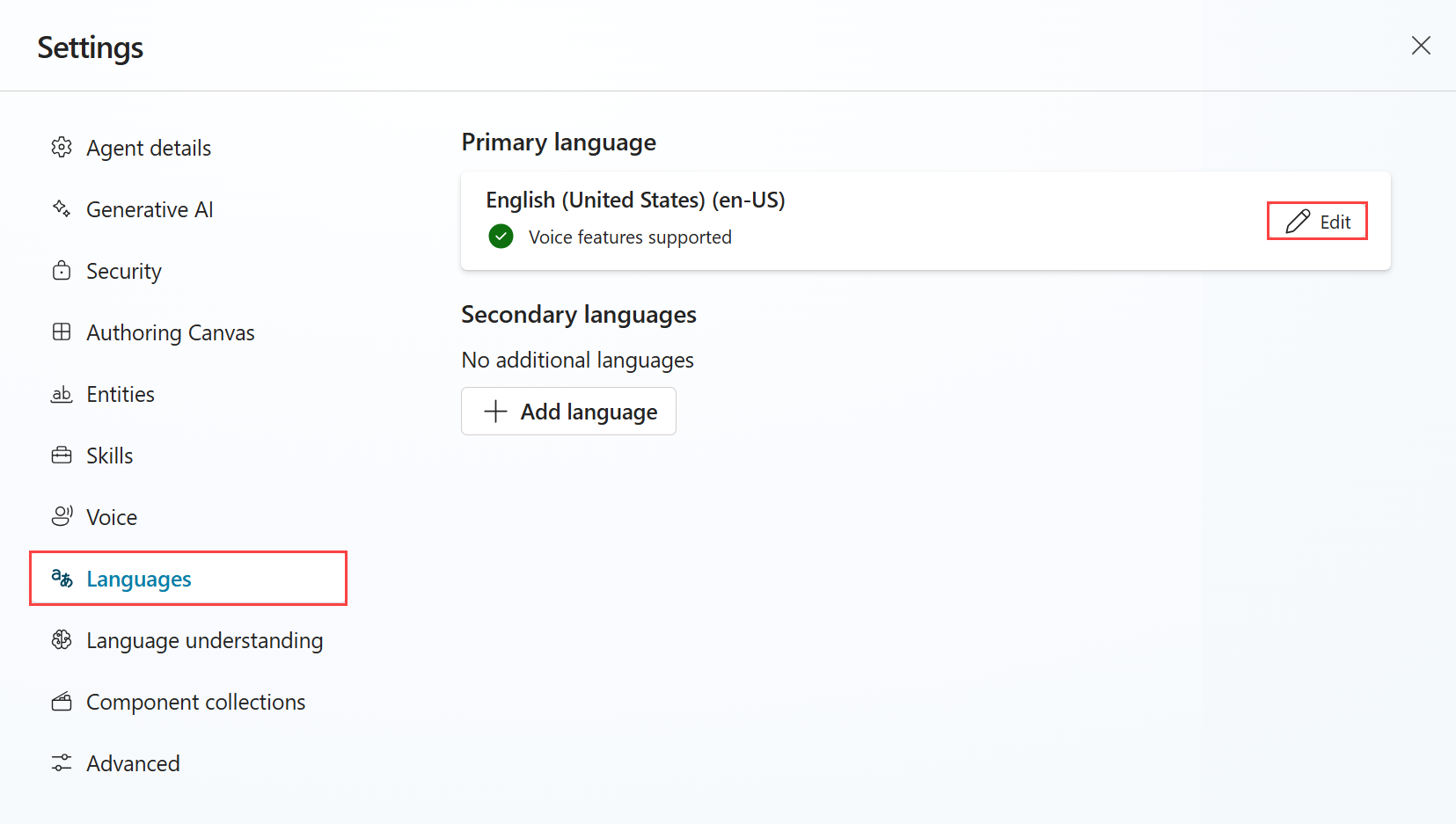

The region change is done from the agent's own settings: go to Settings, then Languages, edit the primary language, and choose the region you want. This is useful if you need to adjust regional nuances (such as spelling, date format, or local expressions) without rebuilding the agent from scratch.

Once the agent is created, Copilot Studio generates the base content in the chosen language: the system topics and the predefined custom topics on the Topics page, all adapted to that primary language.

Copilot Studio as a multilingual platform

The great strength of Copilot Studio is that it lets you create multilingual agents that automatically detect the user's language (based on browser or client settings) and respond in that same language when it is supported.

When designing the agent, you have to choose a primary language, which is the language in which you will do all the editing of topics and content. That primary language acts as the base: everything is designed there and then localized into the other languages.

Then you can add secondary languages. From that moment on, it's you (or your localization team) who is in charge of translating the messages of the topics you create. In agents that use generative orchestration, Copilot automatically translates the generated messages, but you still manage the rest of the content.

When a user interacts with a published agent, the system tries to match the agent language to the browser or client language. If that language is not supported, or nothing is detected, the agent falls back to its primary language.

In addition, you can design your agent so that it changes language mid-conversation, either explicitly (through logic in a topic) or automatically, following the language that the user uses on each turn.

How to add secondary languages to an agent

Adding new languages to a Copilot Studio agent is straightforward from an interface standpoint, although the localization process does require more attention. The basic workflow for adding secondary languages is:

First, go to the agent's Settings page and select Languages. From there, choose the option to add a new language, review the list, and mark the ones you want to incorporate as secondary languages for the agent.

In the language panel, select one or more languages and press Add. Once added, return to the list, verify that everything is correct, and close the settings page. From that moment on, those languages are part of the agent's repertoire.

Keep in mind that, after adding them, you will have to manage the translations (download the strings, translate them, and re-upload them) so that the agent does not leave parts unlocalized and users do not see strange mixtures of the primary and secondary languages.

Localization management in multilingual agents

In Copilot Studio, all topic creation and editing tasks are always performed in the agent's primary language. To translate them into other languages, a localization flow based on JSON or ResX files is used.

When you first download the localization file for a secondary language, all strings appear in the primary language. That file is the one you should put through your usual translation process, whether with human translators, computer-assisted translation tools, or the systems you use in your organization.

The standard process for preparing localized content is usually:

Go to the agent's Settings page, Languages section. Then, in the list of secondary languages, select the option to upload or update the localization. From the Update Localization panel, choose the format (JSON or ResX), download the current localization file, and open it in your editor.

Then replace the primary-language strings with their translations, keeping the identifiers intact. When you're finished, return to the panel, upload the translated file, and close the configuration. Your agent will then have the content ready to respond in that secondary language.

Localized content update and string synchronization

When you modify the content in the primary language (add new messages or change texts), secondary languages do not update automatically. Whenever there are changes to the base strings, you will need to repeat the process of downloading, translating, and uploading the localization files to keep everything up to date.

In practice, when you download the localization file for a secondary language again, you will see that the new strings still appear in the primary language, while those that were already translated remain as you left them.

The important point is that, if you have changed the original text of a string in the primary language, the corresponding translated string will still reflect the old version, because the identifiers don't change. This can cause the primary and secondary languages to drift out of sync.

That's why it's good practice to compare the new localization file with the one you uploaded last time, to detect which strings in the primary language have changed and thus know what needs to be retranslated. If you don't take care of this, you'll end up with agents where Spanish and French say different things, and the user experience suffers.
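The comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it assumes you keep a snapshot of the primary-language strings from each translation round, and that each snapshot can be loaded as a flat mapping from string identifier to text. The real JSON or ResX layout exported by Copilot Studio may be nested differently, and the function name `diff_strings` and the sample identifiers are hypothetical.

```python
from typing import Dict, List


def diff_strings(old_primary: Dict[str, str],
                 new_primary: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare two snapshots of primary-language strings keyed by identifier.

    Returns the identifiers that were added, changed, or removed, so you
    know which entries need to be (re)translated in secondary languages.
    """
    added = sorted(k for k in new_primary if k not in old_primary)
    removed = sorted(k for k in old_primary if k not in new_primary)
    changed = sorted(
        k for k in new_primary
        if k in old_primary and new_primary[k] != old_primary[k]
    )
    return {"added": added, "changed": changed, "removed": removed}


# Hypothetical snapshots: identifiers stay stable, only the text changes.
previous = {"greeting": "Hello!", "goodbye": "Bye"}
current = {"greeting": "Hello there!", "goodbye": "Bye", "help": "How can I help?"}

report = diff_strings(previous, current)
print(report)
```

Entries listed under `changed` are the risky ones: their translations still exist in the secondary language file, so nothing looks missing, yet they no longer match the primary text.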

Localizing dynamic content in Adaptive Cards

One more technical point, but a very relevant one if you use rich interfaces, is that the localization files do not include mixed strings from Adaptive Cards when these combine static text and variables.

For that dynamic card content to be localized, a workaround must be applied: create an intermediate text variable that contains the complete string (fixed text plus variables) and use only that variable in the Adaptive Card.

The procedure involves placing a Set variable value node before the card, which you then adjust in the code editor to convert it into a SetTextVariable node. In that node, you define a new variable and fill the value field with the complete text, inserting the variables where appropriate.

Then you update the Adaptive Card so that it refers only to that variable. When you download the localization file, the value of that variable (with static text and variable references) will appear as a localizable string within a setVariable action, and you will then be able to translate it without losing the dynamic parts.

How to test a multilingual agent

To verify that your agent performs well in multiple languages, Copilot Studio includes a fairly flexible test panel. You can select a specific language and see how the agent understands and responds.

From that panel, open the options menu (the three dots) and choose the language you want. The test panel reloads using that language, while the editing canvas stays in the primary language and, importantly, won't let you save changes to topics until you switch back to that base language.

From there you can write test messages in the selected language and see whether the topics are triggered correctly, how the translations behave, and whether generative orchestration responds as you expect.

Another option is to change the browser language to one of the languages the agent supports and visit the demo website. The site will open directly in that language, and the agent will converse in that language. If you also want to use voice commands, see How to use Hey Copilot.

Make the agent change language in a conversation

If you want your agent to be able to change language mid-session, you can rely on the system variable User.Language. The logic for changing it can live in any topic, although the recommendation is to do it right after a Question node, to ensure that all subsequent messages are issued in the same language.

The trick is to set User.Language to one of the agent's secondary languages. As soon as you do this, the language the agent "speaks" changes immediately, and subsequent responses are generated in that new language.

This is useful, for example, if in an initial flow you ask the user which language they want to continue in and, depending on their choice, you update the User.Language variable. From that point on, the entire flow unfolds in that language.

Dynamic language switching based on what the user types

In addition to the explicit change, Copilot Studio lets you change language dynamically throughout the same conversation, detecting which language the user writes in with each message.

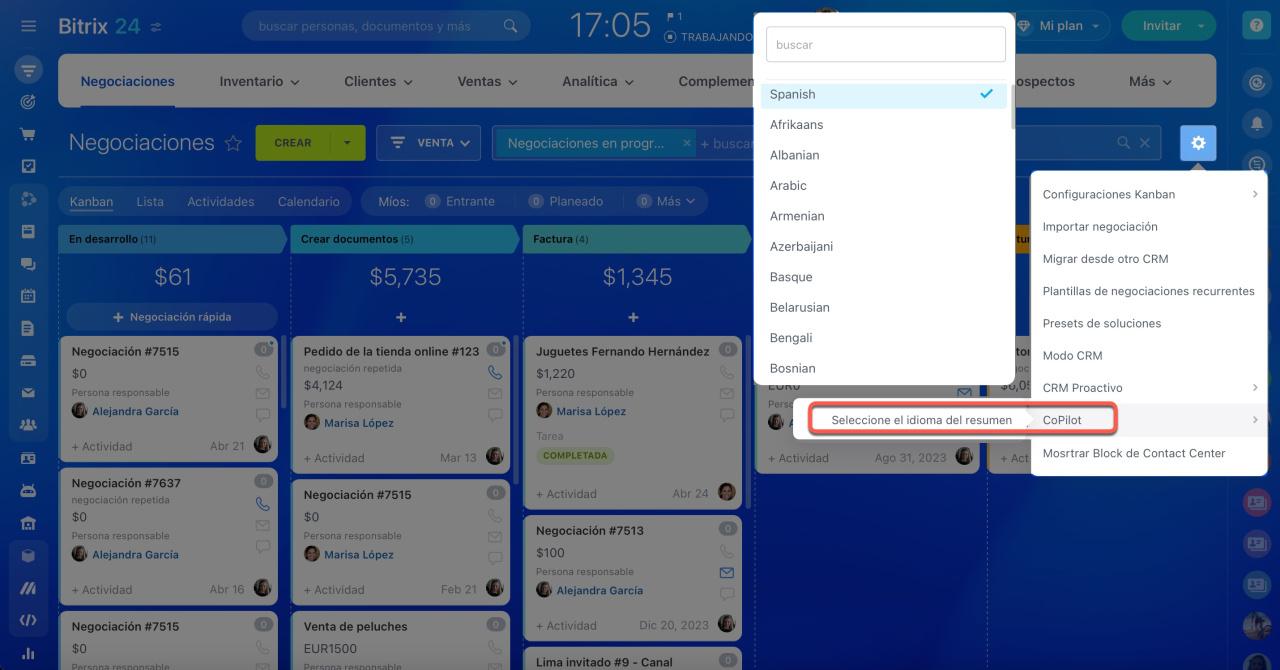

This is usually done using a topic with the trigger “A message is received”, which acts as a general filter on all incoming messages. Within this topic, a custom prompt is configured to determine the message language. The result is stored in a variable (for example, DetectedLanguage.structuredOutput.customlanguage).

Next, a condition is created based on that variable, and the logic branches according to the detected language. In each branch, a Set Variable Value node is added that updates User.Language with the corresponding language.

In this way, if the user starts typing in Dutch and then switches to English, the agent can automatically switch from Dutch to English as many times as necessary during the session, provided those languages are configured as supported by the agent.
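In Copilot Studio this branching is built with condition and Set Variable Value nodes, not with code, but the decision each branch makes can be sketched in Python. This is a minimal illustration: the function name `next_user_language` and the locale codes are assumptions chosen for the example, not values taken from the product.

```python
from typing import Iterable, Optional


def next_user_language(detected: Optional[str],
                       current: str,
                       supported: Iterable[str]) -> str:
    """Return the value to store in User.Language after an incoming message.

    If the detected language is one of the agent's configured languages,
    switch to it; otherwise keep the language already in use.
    """
    if detected is None:
        return current
    # Case-insensitive lookup so "en-us" still matches "en-US".
    by_lower = {code.lower(): code for code in supported}
    return by_lower.get(detected.strip().lower(), current)


# Hypothetical agent configured with Dutch and English.
configured = ["nl-NL", "en-US"]

language = "nl-NL"
for detected in ["nl-NL", "en-us", "fr-FR", None, "nl-nl"]:
    language = next_user_language(detected, language, configured)
    print(detected, "->", language)
```

Note that an unsupported detection (French in this example) leaves the current language untouched rather than forcing a fallback, which mirrors the condition that only configured languages can be switched to.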

Language priority and restoring the default language

When an agent is configured for dynamic language switching and the User.Language variable is set, that preference acts as a persistent override for that user. In other words, the system applies a priority order when deciding which language to respond in:

First, with the highest priority, comes the language preference set for the user and saved as an override in their state. Second, the language of the incoming message (Activity.Locale) is taken into account, as long as it matches one of the supported languages. And, lastly, the agent's primary language is used as a fallback.
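The priority order above can be expressed as a small function. This is a sketch for illustration only: the name `resolve_language` and the sample locale codes are hypothetical, and it assumes locale codes are compared as exact strings.

```python
from typing import Iterable, Optional


def resolve_language(override: Optional[str],
                     activity_locale: Optional[str],
                     supported: Iterable[str],
                     primary: str) -> str:
    """Pick the response language following the priority order in the text."""
    # 1. A persistent User.Language override always wins.
    if override:
        return override
    # 2. Otherwise use the incoming message locale, if the agent supports it.
    if activity_locale is not None and activity_locale in set(supported):
        return activity_locale
    # 3. Otherwise fall back to the agent's primary language.
    return primary


supported_languages = ["es-ES", "en-US", "fr-FR"]

print(resolve_language("fr-FR", "en-US", supported_languages, "es-ES"))
print(resolve_language(None, "en-US", supported_languages, "es-ES"))
print(resolve_language(None, "ja-JP", supported_languages, "es-ES"))
```

The three calls exercise the three levels in turn: the override, the supported message locale, and the primary-language fallback.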

If you want to "clean" that override after testing the dynamic language switch, you can send the /debug clearstate command to the agent. This clears the persistent language from the user's state, and the agent re-evaluates the language based on the browser or the primary language.

Behavior of a multilingual agent with unconfigured languages

If a user configures their browser to a language that you have not enabled in the agent, Copilot doesn't try to improvise: it simply falls back to its primary language. Hence the importance of choosing the right base language, as it will be the fallback for any uncovered situation.

Also remember that, although you can change the primary language region (when there are several variants available, such as different countries), you cannot change the language itself once the agent has been created. If you selected the wrong primary language when starting the project, the solution is to create a new agent.

Missing translations and errors when publishing multilingual agents

Another important behavior: if you add new messages in the primary language and don't update the translations in the secondary languages, the agent will display those texts untranslated, in the primary language, even when the user is using another language.

This doesn't break the agent, but it does affect the user experience: the user will see parts in their language and parts in the base language, so it's advisable to always keep the localization files up to date when you introduce changes to the topics.

When publishing multilingual agents, the following error may also appear: “Bot validation error” with code SynonymsNotUnique. This message indicates that, in some localization file, there are duplicate synonyms or a synonym that matches the DisplayName of an item.

This usually occurs on a node that contains an Entity.Definition.'closedListItem' where one of the Synonyms elements is not unique or matches the DisplayName. The rule is clear: all synonyms for the same entity must be unique and different from DisplayName. To fix this, you need to review the JSON or ResX of the secondary language, locate the conflicting entries, and correct them.
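A check like the following can help locate the conflicting entries before publishing. It is a sketch under assumptions: each closed-list item is represented as a dictionary with a `DisplayName` string and a `Synonyms` list, which may not match the exact structure of the exported file. The comparison is made case-insensitive as a conservative assumption, since the source does not say whether the validator distinguishes case.

```python
from typing import Dict, List, Tuple


def find_synonym_conflicts(items: List[Dict]) -> List[Tuple[str, str, str]]:
    """Return (display_name, synonym, reason) for every rule violation."""
    problems = []
    for item in items:
        name = item["DisplayName"]
        seen = set()
        for synonym in item.get("Synonyms", []):
            key = synonym.casefold()
            if key == name.casefold():
                problems.append((name, synonym, "matches DisplayName"))
            elif key in seen:
                problems.append((name, synonym, "duplicate synonym"))
            seen.add(key)
    return problems


# Hypothetical translated entity with two mistakes in the first item.
translated_items = [
    {"DisplayName": "Red", "Synonyms": ["rouge", "Rouge", "red"]},
    {"DisplayName": "Blue", "Synonyms": ["bleu", "azure"]},
]

conflicts = find_synonym_conflicts(translated_items)
for conflict in conflicts:
    print(conflict)
```

Translation is a common source of these conflicts, because two distinct synonyms in the primary language can collapse into the same word in the target language.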

Language support in Copilot Studio

Copilot Studio offers language compatibility levels for different features. Not all languages are at the same level or for the same functions. Generally, Microsoft classifies support into three phases: general availability (the most robust), preview, and no support.

For the authoring canvas (the interface seen by the agent creator), Copilot Studio uses the browser's language. Supported languages for the interface include, but are not limited to: Arabic, Simplified and Traditional Chinese, Czech, Danish, Dutch, English (United States), Finnish, French (France), German, Greek, Hebrew, Hindi, Indonesian, Italian, Japanese, Korean, Norwegian Bokmål, Polish, Portuguese (Brazil), Russian, Spanish (Spain), Swedish, Thai, and Turkish.

For event triggers (which allow the creation of autonomous agents that react to events without direct user input), Copilot Studio currently supports primarily English (United States). In other words, the more "technical" aspects of external automation are currently geared towards that variant of English.

For generative answers, orchestration, and the user language, the list of languages is even broader: in addition to those already mentioned, it includes different variants of English (Australia, United Kingdom, United States), French (France and Canada), Portuguese (Portugal and Brazil), Spanish (Spain and United States), and many others. These are the languages in which users can write their questions and receive answers generated by the agent.

Voice compatibility largely aligns with this list: agents support interactive voice responses in Arabic, Chinese (Simplified and Traditional), Czech, Danish, Dutch, English (Australia, UK, US), Finnish, French (France and Canada), German, Greek, Hindi, Indonesian, Italian, Japanese, Korean, Norwegian Bokmål, Polish, Portuguese (Brazil and Portugal), Russian, Spanish (Spain and US), Swedish, Thai, and Turkish.

Supported languages and language errors in Microsoft 365 Copilot

Microsoft 365 Copilot doesn't always support the same languages as Copilot Studio, especially when it comes to the language of requests and responses. It is relatively common for the Teams or Office user interface to be translated into many more languages than Copilot is able to process.

If you use Microsoft 365 Copilot and you see an error indicating that a language is not supported, this means that the system cannot process the request in that specific language at that moment. You might see this error even if the entire application interface is perfectly translated into your language.

When this happens, the message usually asks you to rephrase your request in one of the accepted languages and try again. In other words, Copilot forces you to change the voice or text language of your request to a supported language if you want to receive a response.

Microsoft expands the list of supported languages as the product evolves, and the official documentation is updated when new languages are added. If your working language is not yet supported, you will need to combine Copilot with external translation tools for now.

Support and community for questions about Copilot

If you encounter language, voice, or configuration problems that are not resolved by the documentation, Microsoft recommends turning to Microsoft Q&A for advanced Copilot issues. Although the Answers forums still exist, there are fewer specific resources there for testing and diagnosing complex Copilot cases.

Microsoft Q&A involves a more specialized group of technical staff and other users who share experiences, configurations, and solutions. This often leads to more refined answers when you get stuck on strange language, voice, or integration behavior.

Copilot Studio and interactive voice with Dynamics 365 Contact Center

When we talk about “Copilot voice language” in a contact center context, we are referring to the interactive voice response (IVR) capabilities integrated with Dynamics 365 Contact Center. Here, a voice-enabled agent works differently from a purely chat agent, because it handles both voice and DTMF input (the tones dialed from the telephone keypad).

A voice agent includes specific system topics for voice scenarios such as silence detection, unrecognized speech, or unknown keystrokes. These topics are added automatically when you enable voice in the agent settings and allow you to handle typical phone call situations.

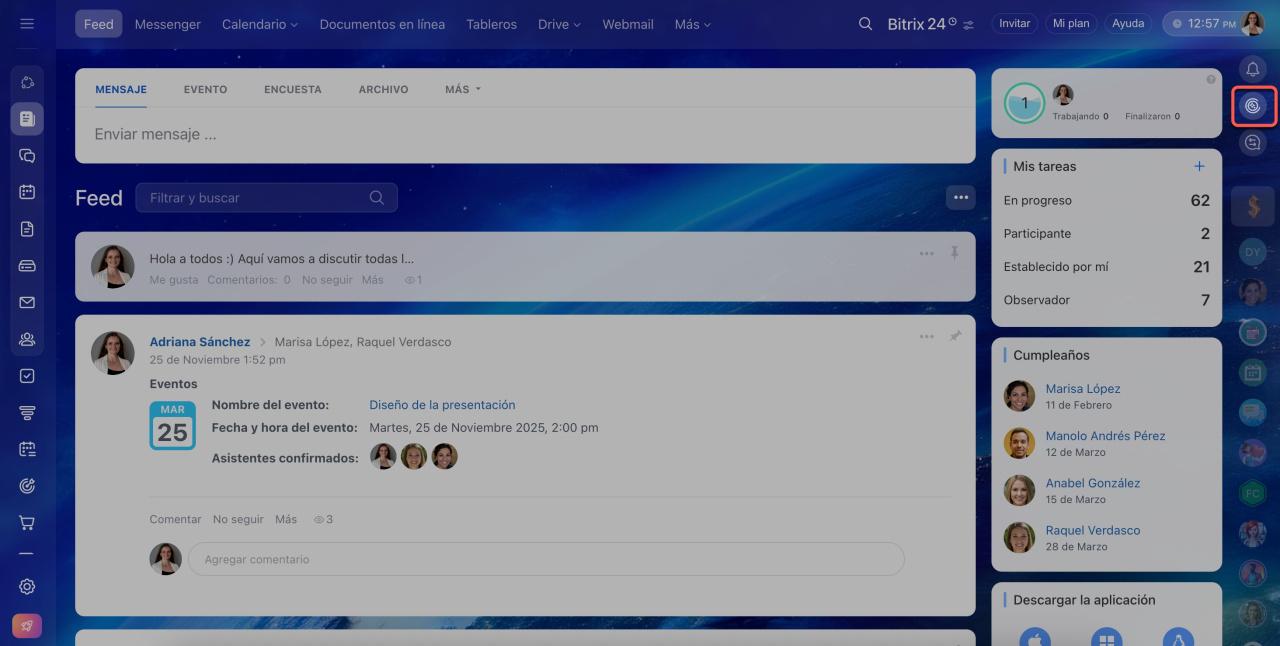

The Enable voice option is activated from Settings > Agent Voice. When doing so, you can also decide whether voice will be the default mode for topic authoring and whether you will use a basic approach (classic topics) or real-time voice (generative orchestration).

If at any point you stop using the telephone channel, you can disable voice optimization. This will revert the default mode to text, remove speech recognition enhancements, and prevent the creation of voice-specific elements, although the telephony channel itself is not removed until you modify it.

Use of the voice as the primary mode of creation

When voice is enabled, you can choose voice and DTMF as the modality for each node of your topics. In addition, there is an agent-level preference to mark voice as the primary creation mode, ensuring that all fields that require it are configured with the appropriate mode.

This also affects message availability by channel. There are differences between how messages are delivered on purely text channels and on combined text and voice channels. For example, certain messages may be left blank or unavailable in voice format if they are not prepared for that mode.

Customized automatic voice recognition

In specialized domains (healthcare, finance, etc.) it is common for the user to speak using technical terms or jargon that a generic system might not recognize well. To improve accuracy, Copilot Studio allows you to activate customized automatic speech recognition that draws on the agent's own data.

This setting is enabled from Settings > Agent Voice, by checking the option Increase accuracy with agent data. After saving the changes and publishing the agent, calls should benefit from more refined recognition in that specific context. If you need to configure recognition at the system level, see How to enable or disable voice recognition and access in Windows 11.

Global voice settings: timeouts, mute, and latency

The agent-level Voice configuration allows you to set default values for timeouts and behavior, which can then be overridden at the node level. Among other parameters, you can adjust:

The DTMF digit waiting time, that is, how many milliseconds can pass between keystrokes when the user enters multiple digits. Also the DTMF completion timeout, which determines how long to wait for the end key before assuming that the input is finished.

Silence detection defines how long the user can remain silent before the agent acts (for example, by repeating the question or triggering a silence topic). Additionally, for voice capture, you can control the utterance completion time (the pause after speaking) and the total voice recognition time.

Another important area is latency messages for long-running operations. You can decide how long the agent waits before sending a message informing the user that it is processing something, and for how long, at a minimum, that message will be played even if the operation finishes early.

Finally, you can control voice sensitivity. This setting balances voice detection against background noise. Reducing it is useful in noisy environments or when using hands-free devices; increasing it helps in very quiet contexts or with users who speak very softly. Unlike other controls, this one cannot be overridden at the node level.

Interrupt (barge-in) and when to deactivate it

A highly valued feature in advanced IVR systems is the ability to interrupt the agent while it is speaking. Copilot Studio allows you to enable interruption for both voice and DTMF inputs, making the experience more streamlined when the user already knows the menu options and doesn't need to hear the entire voice prompt.

However, there are times when it makes sense to disable interruption, such as in the agent's first message (to ensure everyone hears the key information) or in compliance messages that should not be cut off.

The configuration is done node by node: in each Message or Question with voice and DTMF modes, you can enable or disable the interrupt option. The system handles message queues in batches so that, when a queue finishes, the interrupt configuration is reset for the next block.

Detailed settings for mute, repeat, and alternative actions

In addition to global wait times, you can customize how a specific node behaves when the user is silent. For each voice question, you can choose between using the global setting, disabling the timeout (waiting indefinitely), or specifying a number of milliseconds before repeating the question.

You can also define fallback actions: how many times the same question is repeated, what message is used for those repetitions, and what the agent does after exceeding the maximum number of attempts (for example, transfer to a human agent or end the call).

For voice input specifically, you can set parameters such as the pause time that is considered the end of the utterance and the maximum time given to the user once they begin answering. This allows the system to adapt its behavior to calls with more deliberate users or to contexts where speed is key.
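The interaction between the silence timeout, the repeat count, and the fallback action can be modeled as a small decision function. This is an illustrative sketch only: the `SilencePolicy` class, its field names, and the action labels are hypothetical and do not correspond to Copilot Studio property names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SilencePolicy:
    timeout_ms: Optional[int]  # None means the timeout is disabled (wait forever)
    max_retries: int           # how many times the question may be repeated
    fallback: str              # hypothetical label, e.g. "transfer" or "end_call"


def on_silence(policy: SilencePolicy, silent_ms: int, retries_so_far: int) -> str:
    """Decide what to do after the caller has been silent for silent_ms."""
    if policy.timeout_ms is None or silent_ms < policy.timeout_ms:
        return "wait"
    if retries_so_far < policy.max_retries:
        return "reprompt"
    return policy.fallback


policy = SilencePolicy(timeout_ms=5000, max_retries=2, fallback="transfer")

print(on_silence(policy, silent_ms=3000, retries_so_far=0))
print(on_silence(policy, silent_ms=5000, retries_so_far=0))
print(on_silence(policy, silent_ms=5000, retries_so_far=2))
```

Modeling the disabled timeout as `None` rather than as a very large number keeps the "wait indefinitely" case explicit.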

Latency messages and long operations

In scenarios where the agent triggers back-end operations that take a long time to complete (heavy queries, external integrations, etc.), it is a good idea to configure latency messages that inform the user that the system is still working.

In Copilot Studio, you can have the agent send and repeat a message while a Power Automate flow is still running. In the action node properties, select the option to send a message. You define the text (even using SSML to adjust voice, pauses, and emphasis) and control how long to wait before repeating it and what the minimum playback time is.
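To see how the initial delay and the minimum playback time interact, the following sketch computes when the conversation can continue. It reflects one reading of the two settings described in this guide and ignores message repetition; the function and parameter names are hypothetical.

```python
def resume_time_ms(operation_ms: int, delay_ms: int, min_playback_ms: int) -> int:
    """Time (from the start of the operation) at which the agent continues.

    - If the operation finishes before the delay elapses, no latency
      message is played and the agent continues immediately.
    - Otherwise the message starts at delay_ms and is played for at least
      min_playback_ms, even if the operation finishes earlier.
    """
    if operation_ms <= delay_ms:
        return operation_ms
    return max(operation_ms, delay_ms + min_playback_ms)


# Fast operation: no message, continue at 300 ms.
print(resume_time_ms(operation_ms=300, delay_ms=500, min_playback_ms=2000))
# Operation ends mid-message: wait until the minimum playback completes.
print(resume_time_ms(operation_ms=800, delay_ms=500, min_playback_ms=2000))
# Slow operation: continue as soon as it finishes.
print(resume_time_ms(operation_ms=5000, delay_ms=500, min_playback_ms=2000))
```

The middle case shows the trade-off: a long minimum playback avoids cutting the message off abruptly, but it can make a moderately fast operation feel slower than it was.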

Call termination, answering machines, and context variables

In a real IVR, you also need the agent to be able to end the call explicitly when the flow ends. This is configured by adding a conversation termination node to the relevant topic, within the topic management section.

There is also a system topic for answering machine detection. This feature allows you to detect when a call reaches voicemail and leave a recorded message. In the Message node of this topic, you define the text that plays when the agent detects an answering machine.

To integrate all of this with Dynamics 365 Contact Center, Copilot Studio supports context variables, which are passed along with the call when it is transferred to a human agent or other systems. Examples include the caller ID, the number that was called, the SIP UUI header, the input type (voice or DTMF), the last key pressed, whether only DTMF is allowed, and speech recognition confidence values.

These variables can be used to enrich the experience of the human agent who receives the call (showing previous context, data collected during the interaction with the bot, etc.) and to make intelligent routing decisions within the contact center.

Format the voice with SSML and change "how Copilot sounds"

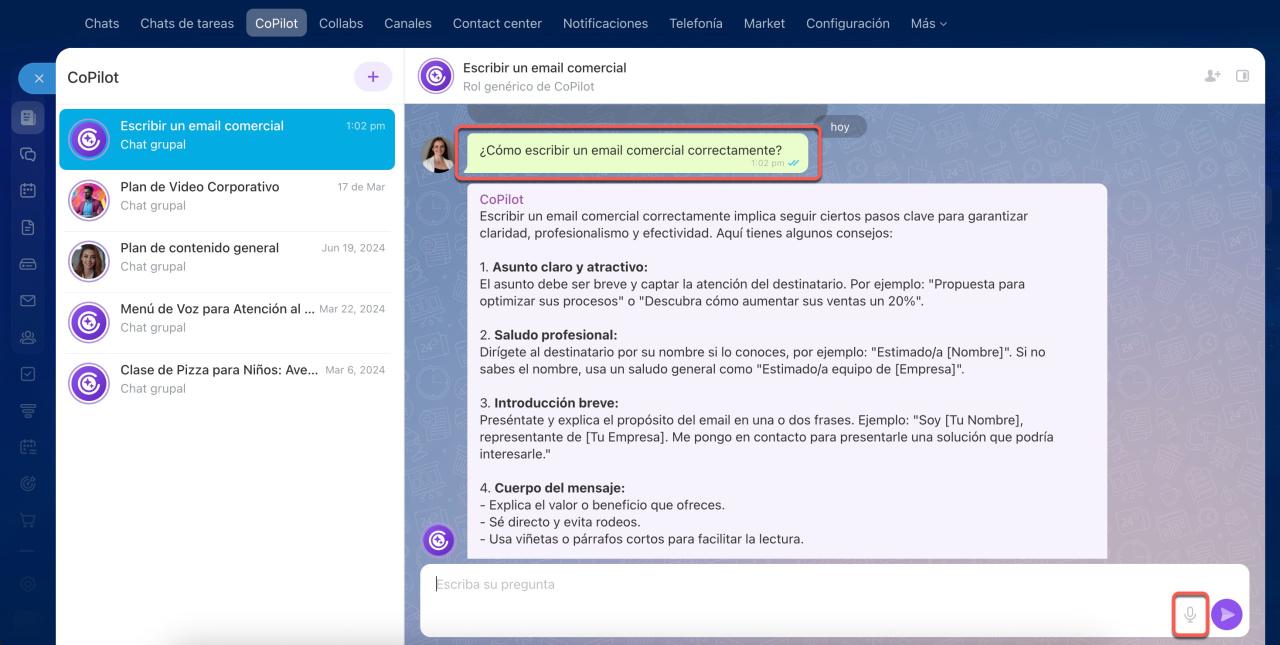

Beyond choosing language and voice, Copilot Studio allows considerable fine-tuning of how the agent sounds, thanks to SSML (Speech Synthesis Markup Language). SSML works with HTML-like tags that wrap the text you want to modify; you can also combine it with voice tools such as Wispr Flow to turn your voice into workflows and productivity.

Among the most useful tags are audio (to insert external audio files), break (to insert pauses), emphasis (to emphasize certain words), prosody (to adjust pitch, speed and volume) and lang (to alternate speech languages within the same message when using a multilingual neural voice).

From the Copilot Studio interface, you can insert these tags directly into the Message or Question nodes in voice mode. The SSML tags menu inserts the tag template, and then you simply wrap the parts of the text you want to modify. You can combine multiple tags and customize different sections of the same message with different voice styles.
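As an illustration of what tagged text looks like, the sketch below assembles a message fragment with standard SSML elements (`break`, `emphasis`, `prosody`) and checks that it is well-formed XML. The helper functions are hypothetical conveniences for this example; in Copilot Studio you would type or insert the tags in the message editor. The `<speak>` wrapper is used here only to give the parser a root element, and the set of attribute values a given voice accepts should be checked against the official documentation.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr


def pause(ms: int) -> str:
    """Insert a pause of the given length in milliseconds."""
    return f'<break time="{ms}ms"/>'


def emphasize(text: str, level: str = "strong") -> str:
    """Wrap text in an emphasis tag, escaping XML special characters."""
    return f"<emphasis level={quoteattr(level)}>{escape(text)}</emphasis>"


def slow(text: str, rate: str = "slow") -> str:
    """Wrap text in a prosody tag that changes the speaking rate."""
    return f"<prosody rate={quoteattr(rate)}>{escape(text)}</prosody>"


message = (
    "Your reference is "
    + pause(300)
    + emphasize("A & B 42")
    + ". "
    + slow("Please write it down.")
)

# Raises xml.etree.ElementTree.ParseError if a tag is unbalanced.
ET.fromstring(f"<speak>{message}</speak>")
print(message)
```

Escaping matters because characters such as `&` or `<` in user data would otherwise break the markup and could cause the message to fail when it is synthesized.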

Transfers, integration with telephony and the future of real-time translation

Copilot Studio also makes it possible to transfer calls to external numbers (PSTN or direct routing) or to human representatives. For external transfers, a specific node is configured where you specify the phone number and, optionally, a SIP UUI header with key-value pairs that can be read by external systems. Currently, these transfers do not support the use of variables in the number.

Looking a little further ahead, Microsoft is working on features for real-time voice translation in video calls from Teams, powered by Copilot. The idea is that a person's voice can be simulated and translated live into another language, so that the other participants hear another audio track, in another language, but with a timbre very similar to the original voice.

This function, designed primarily for companies and organizations that cannot afford an interpreter, will initially offer a range of languages, including Spanish, English, German, French, Italian, Korean, Mandarin, Japanese, and Brazilian Portuguese. Before each call, users will need to give their consent and choose which language they want to be used.

Microsoft has emphasized that this is not a "deepfake" in the classic sense, since it doesn't simulate you speaking another language perfectly in a naturalistic way, but rather generates an alternative audio track that respects your original voice as much as possible, only translated. This work is part of Copilot's ongoing evolution towards smoother multilingual communication in both text and voice.

With all of the above, it's clear that changing Copilot's voice language isn't just a matter of menus, but of understanding which languages each component supports (Studio, Microsoft 365, Contact Center). This includes understanding how translations are managed and how variables such as User.Language, automatic language detection, and voice and SSML settings influence the process. Mastering these elements will allow you to build truly natural, multilingual Copilot experiences adapted to your work context, avoiding surprises with unsupported languages or messages that slip through in the wrong language.
