- Transforming a file into an intellectual dataset involves vectorizing the corpus, building relationship graphs, and connecting an AI assistant to explore it for ideas.

- The quality, localization, and rigorous evaluation of the dataset are as crucial as the model architecture for obtaining reliable, context-aware AI.

- Data annotation, supported by specific tools and quality control processes, transforms the file into trainable raw material for multiple tasks.

- Personal files, open repositories, and simulated data are combined to create dataset ecosystems applicable to sectors such as health, transportation, finance, or education.

Converting a personal archive into a living, useful intellectual dataset is no longer just for large laboratories: anyone who has been producing digital content for years can transform that history into a navigable knowledge base and fuel for artificial intelligence systems. The interesting part isn't just saving everything you've written in a suitable format, but making those pieces talk to each other, relate to each other, and be searchable by ideas, contexts, or actors, beyond a simple keyword search.

That jump from a static file to a curated, annotated, and searchable dataset opens the door to uses ranging from a personal assistant trained on your own texts to the construction of language models tailored to a specific culture or sector. In this article, we'll explain calmly and clearly what it means to treat a file as an intellectual dataset, how it's built technically, what translating or annotating it properly entails, what tools are involved, and in which real-world contexts it's already being used.

From scattered archive to navigable intellectual dataset

Imagine you've been writing articles about technology, regulation, privacy, or artificial intelligence on a single platform for over a decade. You request a complete dump of your texts (a plain text file with thousands of documents) and receive it in a matter of minutes. On paper, that file is valuable: it encapsulates fourteen years of your thinking. But as long as it remains just unstructured text, you can do little more than search for individual words.

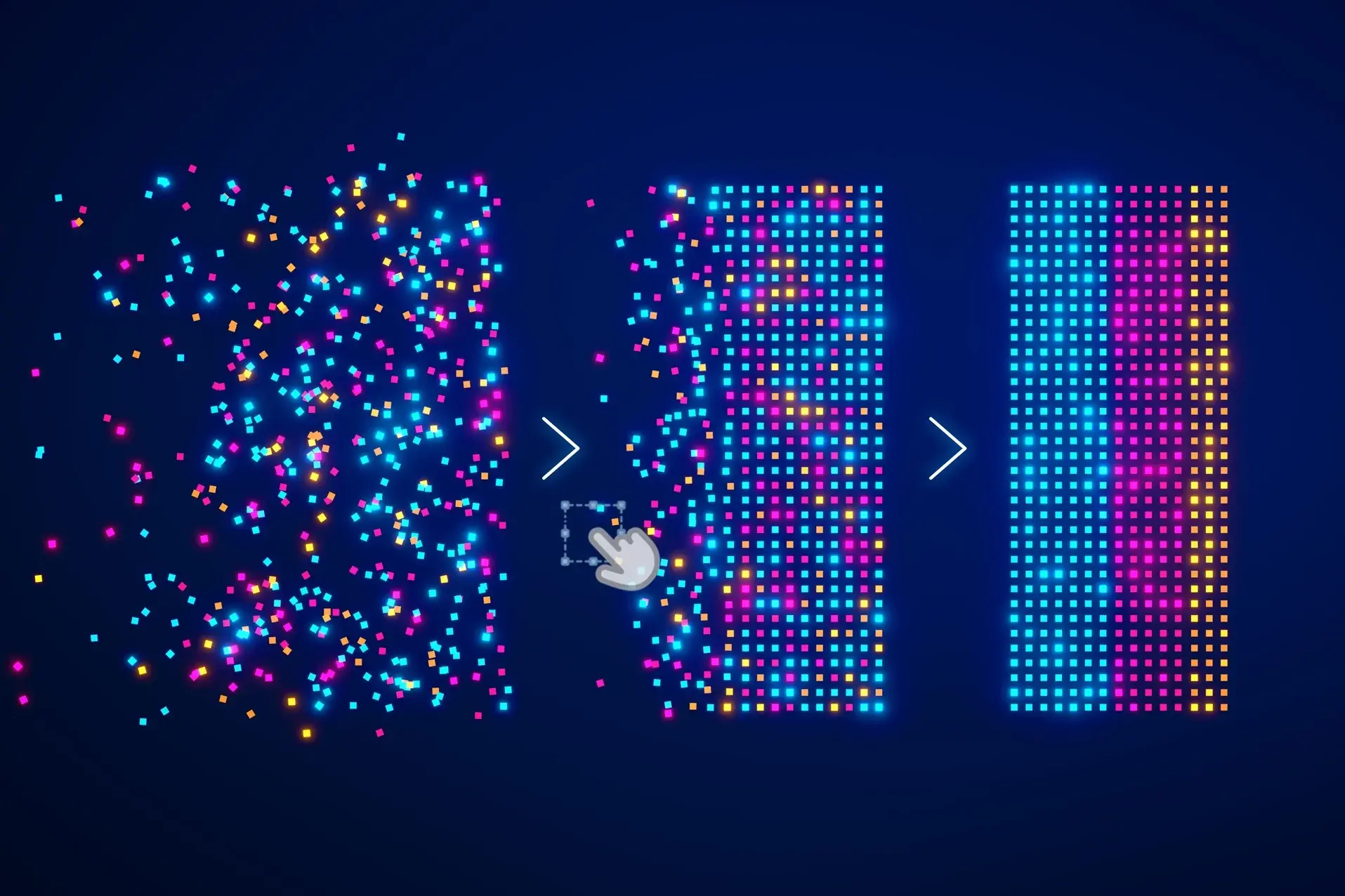

The first conceptual shift involves ceasing to view that file as a stack of documents and starting to treat it as a coherent corpus that can be indexed and explored. The idea is to transform each article into a numerical representation that captures its meaning, store those vectors in a specialized database, and build upon them a graph of relationships and an assistant that intimately understands that semantic space.

In a real-world case study involving 4,209 articles written in English over fourteen years, the author used a multilingual embedding model (paraphrase-multilingual-mpnet-base-v2, from sentence-transformers) to convert each text into a 768-dimensional vector. These vectors were stored in ChromaDB, an open-source vector database. The entire process, run on a standard server without a GPU, was completed in about five minutes: the flat file was transformed into a continuous space of ideas.

In practice, this means that you no longer search "by keywords" but by concepts, questions, or text fragments. You can run queries like "When did I start writing about technology monopolies?" or "Which articles are conceptually close to this argument?" and the system responds by locating the closest vectors in that semantic space, even if they don't share the same vocabulary. It's a very direct way to extend your memory of your own work.
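
To make "locating the closest vectors" a little more tangible, here is a minimal sketch of nearest-neighbor search by cosine similarity. It is not the author's code: the three-dimensional vectors, the article titles, and the function names are invented for illustration, and a real setup would get 768-dimensional vectors from the embedding model and delegate the search to the vector database.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest_articles(query_vector, index, k=3):
    """Return the k (title, score) pairs most similar to query_vector."""
    scored = [
        (title, cosine_similarity(query_vector, vector))
        for title, vector in index.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy vectors standing in for real embeddings.
index = {
    "Platform monopolies": [0.9, 0.1, 0.0],
    "Privacy regulation": [0.2, 0.9, 0.1],
    "Electric mobility": [0.0, 0.1, 0.9],
}
query = [0.8, 0.3, 0.0]  # stand-in for the embedding of a question
results = nearest_articles(query, index, k=2)
```

The query never has to share a word with the article it retrieves: only the direction of the vectors matters, which is why the search works across different vocabulary.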

A graph was also constructed on top of this vector layer using NetworkX, where each node represents an article and the edges connect texts that share thematic tags or exceed a certain threshold of semantic similarity. Visualized in tools like Gephi, the result is a map of your intellectual output organized into clusters (regulation, platforms, privacy, artificial intelligence, electric mobility…) that also reveals unexpected connections between texts separated by years but very close in ideas.
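
The edge rule described above (shared tags, or similarity over a threshold) can be sketched in a few lines. This version deliberately uses plain dictionaries instead of NetworkX so that it runs without dependencies; the article ids, tags, similarity scores, and the 0.8 threshold are all assumptions made for the example.

```python
from itertools import combinations


def build_graph(articles, similarity, threshold=0.8):
    """Build an undirected graph as an adjacency dict.

    articles: dict mapping article id -> set of tags.
    similarity: function (id_a, id_b) -> float.
    Two articles are linked if they share a tag or if their
    similarity exceeds the threshold.
    """
    graph = {article_id: set() for article_id in articles}
    for a, b in combinations(sorted(articles), 2):
        shares_tag = bool(articles[a] & articles[b])
        if shares_tag or similarity(a, b) > threshold:
            graph[a].add(b)
            graph[b].add(a)
    return graph


# Hypothetical articles and precomputed similarity scores.
articles = {
    "a1": {"privacy"},
    "a2": {"privacy", "regulation"},
    "a3": {"mobility"},
    "a4": {"platforms"},
}
scores = {("a3", "a4"): 0.85}


def similarity(a, b):
    """Look up a symmetric score; unknown pairs count as 0.0."""
    return scores.get((a, b), scores.get((b, a), 0.0))


graph = build_graph(articles, similarity)
```

Here a1 and a2 are linked by a shared tag, while a3 and a4 share no tag and are linked only because their similarity is high, which is the kind of unexpected connection the graph is meant to surface.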

An agentic personal assistant as a gateway to the archive

The other key element of this approach is having an artificial intelligence assistant anchored to your dataset that can operate as a continuous collaborator, not just a simple chatbot in a browser tab. In the previous example, that assistant was named Bautista: an agentic instance based on Anthropic models (Claude Sonnet 4.6) but capable of working with other models depending on the API used.

Bautista resides on the author's own server and is orchestrated using an OpenClaw-like infrastructure platform, which manages persistent memory between sessions, communication channels (such as Telegram), scheduled tasks, and access to tools like the file system and script execution. Thanks to this, the assistant maintains continuity over time: it remembers previous projects, preserves files, automates processes, and can act autonomously when instructed.

The difference compared to opening a generic model in the browser is that here we're not talking about just another chat, but something more like an embedded collaborator in your infrastructure, specialized in your own corpus and capable of acting as an intermediary between that vectorized file and your daily needs: locating texts, detecting redundancies, retrieving quotes, running specific analyses, or launching maintenance scripts.

Furthermore, the system was designed to keep the archive alive without manual intervention. Every time the author publishes a new article on Medium and shares the full access link on Bluesky, a scheduled process queries the Bluesky public API daily, detects the new entry, downloads the text, generates its embedding, and adds it to ChromaDB. This automated indexing pipeline ensures that the dataset updates itself, avoiding the classic problem of knowledge bases becoming obsolete because updating them is a chore.
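
The core of such a pipeline is an idempotent "add only what is new" step. The sketch below shows that logic in isolation and makes no claim about the author's implementation: the feed, the download step, and the embedding function are passed in as parameters, and the `fake_*` stand-ins and the dictionary used as a store are hypothetical placeholders for the Bluesky query, the article download, the embedding model, and ChromaDB.

```python
def ingest_new_posts(fetch_recent_posts, download_text, embed, store):
    """Add posts that are not yet in the store; return the URLs added.

    store is any dict-like object keyed by article URL, so running the
    function twice on the same feed adds nothing the second time.
    """
    added = []
    for post in fetch_recent_posts():
        url = post["url"]
        if url in store:
            continue  # already indexed on a previous run
        text = download_text(url)
        store[url] = {"text": text, "vector": embed(text)}
        added.append(url)
    return added


# Hypothetical stand-ins for the real feed, downloader, and embedder.
def fake_feed():
    return [{"url": "https://example.com/a"}, {"url": "https://example.com/b"}]


def fake_download(url):
    return f"text of {url}"


def fake_embed(text):
    return [float(len(text))]


store = {}
first_run = ingest_new_posts(fake_feed, fake_download, fake_embed, store)
second_run = ingest_new_posts(fake_feed, fake_download, fake_embed, store)
```

Keying the store by URL is one simple design choice: it makes the daily job safe to re-run after a failure without creating duplicates.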

In a later phase, new layers of analysis were added, such as the recognition of named entities (people, companies, technologies, countries, institutions) across the entire corpus, with the aim of studying the temporal evolution of mentions and co-occurrence networks. Thanks to this, it is possible to see when an actor like OpenAI appears in the texts, when Google+ disappears, or how different agents relate to each other over time, bringing the system closer to large-scale discourse analysis than to a simple personal archive.
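
Once entities have been extracted, the temporal and co-occurrence analyses are mostly counting. The following sketch assumes the entity recognition step has already run and produced a list of entities per article; the documents, years, and entity lists are invented toy data, not figures from the case study.

```python
from collections import Counter
from itertools import combinations


def mention_span(docs, entity):
    """Return (first_year, last_year) in which the entity is mentioned."""
    years = [doc["year"] for doc in docs if entity in doc["entities"]]
    return (min(years), max(years)) if years else None


def cooccurrences(docs):
    """Count how many documents mention each unordered pair of entities."""
    counts = Counter()
    for doc in docs:
        for pair in combinations(sorted(set(doc["entities"])), 2):
            counts[pair] += 1
    return counts


# Hypothetical output of an entity recognition pass (toy data).
docs = [
    {"year": 2011, "entities": ["Google+", "Google"]},
    {"year": 2013, "entities": ["Google+", "Facebook"]},
    {"year": 2019, "entities": ["OpenAI", "Google"]},
    {"year": 2023, "entities": ["OpenAI", "Google", "Microsoft"]},
]

openai_span = mention_span(docs, "OpenAI")
pair_counts = cooccurrences(docs)
```

The pair counts can be used directly as edge weights in a co-occurrence network, and the spans give the "appears" and "disappears" dates for each actor.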

Dataset, model and behavior: the file as raw material for AI

In the context of artificial intelligence, a dataset is much more than a collection of data: it is the training material that shapes the model's behavior. In natural language processing (NLP), this dataset usually takes the form of texts, dialogues, instructions, or annotations that allow models to learn to translate, summarize, converse, or classify emotions, among other tasks.

For years, the emphasis was placed almost exclusively on the model's architecture (number of parameters, type of layers, computing capacity), but recent experience has shown that the quality, diversity, and suitability of the training data weigh as much as, or even more than, the model design. As studies such as those by Bender et al. or Paullada et al. point out, models are no better than the data that feeds them: if the dataset is biased, incomplete, or unrepresentative, the AI will reproduce those same flaws.

This change in perspective has driven a clear movement towards quality over quantity. It's not just about collecting massive volumes of text, but about ensuring that this data is aligned with the language, regional variety, and cultural context in which the AI will be deployed (Blasi et al., Kreutzer et al.). This is especially relevant if we think of an archive as an intellectual dataset: an author's personal corpus can be very rich, but if it is then to be used for models that operate in other languages or markets, curation and adaptation become critical.

Furthermore, the dataset is not only useful for training, but also for evaluating model performance. If a model is evaluated using data it has already seen during training (data contamination), the resulting metrics are misleading and mask its true limitations, as analyzed by Dong et al. and Samuel et al. Therefore, when transferring a personal file to an AI context, it is crucial to distinguish which parts are used for training, which for validation, and which for testing, as well as to design rich and challenging evaluation sets, rather than trivial tests that all models pass.
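
As a rough illustration of both ideas, the sketch below assigns each document to a split deterministically from a hash of its id, and flags test texts that also appear (after light normalization) in the training set. The 80/10/10 proportions, the function names, and the exact-match notion of contamination are assumptions for the example; real contamination checks usually also look for near-duplicates and partial overlaps.

```python
import hashlib


def assign_split(doc_id, train=0.8, validation=0.1):
    """Deterministically map a document id to train, validation, or test."""
    digest = hashlib.sha256(doc_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    if bucket < train:
        return "train"
    if bucket < train + validation:
        return "validation"
    return "test"


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants match."""
    return " ".join(text.lower().split())


def contaminated(train_texts, test_texts):
    """Return the test texts whose normalized form also appears in training."""
    seen = {normalize(text) for text in train_texts}
    return [text for text in test_texts if normalize(text) in seen]


leaks = contaminated(["Hello   World"], ["hello world", "a different text"])
```

Hashing the id rather than shuffling means an article always lands in the same split, even when the archive grows and the split is recomputed.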

Translating and localizing datasets: much more than just transferring text from one language to another

When you want to reuse a file as an intellectual dataset in several languages or markets, a specific challenge arises: the translation and localization of the corpus. At first glance, it may seem like just another translation project, but an AI dataset is often made up of isolated fragments, loose dialogues, short instructions, or structured data without a clear narrative context, which completely changes the rules of the game.

In many datasets we find very limited context: phrases without information about who is speaking, the situation, or their intention. The translator has to infer pragmatic functions (a command, a doubt, an insult, irony) so that the model can properly learn the behavior in the target language. In addition, there are elements that must not be touched, such as code snippets, variables, placeholders, or technical labels, where a naive translation can break the dataset.
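
One common way to keep those untouchable elements safe is to mask them before translation and restore them afterwards. This is a minimal sketch of that idea, assuming placeholders look like `{name}` and code snippets are wrapped in backticks; the pattern, the `__P0__` token format, and the function names are illustrative choices, and the "translation" step is simulated with simple string replacements.

```python
import re

# Assumed conventions: {placeholders} and `inline code` must not be translated.
PROTECTED = re.compile(r"\{[^{}]*\}|`[^`]*`")


def mask(text):
    """Replace protected spans with numbered tokens.

    Returns the masked text and the list of original spans.
    """
    spans = []

    def _swap(match):
        spans.append(match.group(0))
        return f"__P{len(spans) - 1}__"

    return PROTECTED.sub(_swap, text), spans


def unmask(text, spans):
    """Put the original spans back in place of their tokens."""
    for i, span in enumerate(spans):
        text = text.replace(f"__P{i}__", span)
    return text


source = "Hello {user_name}, run `pip install x` now"
masked, spans = mask(source)

# Stand-in for a human or machine translation of the masked text.
translated = (
    masked.replace("Hello", "Hola").replace("run", "ejecuta").replace("now", "ahora")
)
restored = unmask(translated, spans)
```

A useful follow-up check is to confirm that every token survived translation, since a missing or duplicated token is an early sign that a segment was mangled.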

Consistency at scale also comes into play: seemingly small decisions, such as choosing between "tú" or "usted," handling inclusive gender, or the treatment of certain Anglicisms, are multiplied by millions of examples. An inconsistency that might go unnoticed in a book becomes noise here that the model internalizes. And, unlike in editorial translation, it's often best to keep grammatical errors or colloquialisms as they appear, because the goal isn't to "polish" the text, but to teach the AI how people actually express themselves.

Therefore, rather than translating, it is more appropriate to talk about localizing datasets. This involves adapting cultural references, names of institutions, brands, date formats, currencies, and units of measurement, and adjusting the register to the social norms of the target market. In large language models, this localization is what distinguishes an AI that simply "speaks Spanish" from one that understands cultural nuances, expectations of politeness, and local references.

Companies with a long history in software and content localization, such as imaxin, have been evolving towards the translation and specific curation of datasets. Combining human expertise, finely tuned style guides, curated glossaries, and computer-assisted and machine translation tools, their approach resembles a data quality control process more than traditional translation: precise definition of linguistic and technical criteria, management of deliberate errors, use of automated QA, and statistical sampling to monitor consistency.
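
A small example of what automated QA against a glossary can look like: the check below flags segments where a glossary term appears in the source but its approved rendering is missing from the translation. It is a deliberately naive sketch (plain substring matching, no morphology), and the glossary entry, the segments, and the function name are invented for illustration rather than taken from any vendor's process.

```python
def glossary_violations(pairs, glossary):
    """Return (segment_index, term) for each glossary term not rendered as approved.

    pairs: list of (source_text, translated_text).
    glossary: dict mapping a source term to its approved translation.
    Matching is a case-insensitive substring test.
    """
    violations = []
    for index, (source, target) in enumerate(pairs):
        for term, approved in glossary.items():
            in_source = term.lower() in source.lower()
            in_target = approved.lower() in target.lower()
            if in_source and not in_target:
                violations.append((index, term))
    return violations


# Hypothetical glossary and translated segments.
glossary = {"dashboard": "panel"}
pairs = [
    ("Open the dashboard", "Abre el panel"),
    ("The dashboard is empty", "El tablero está vacío"),
]
issues = glossary_violations(pairs, glossary)
```

Run over millions of segments, even a crude check like this makes inconsistent terminology visible before it turns into noise the model learns.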

Annotated dataset: giving structure and meaning to the data

For a file to become a truly useful intellectual dataset for supervised models, it is not enough to simply collect texts: they must be annotated. An annotated dataset is one in which each element (image, audio fragment, sentence, table) has been given metadata that describes its content or function: tags, categories, entities, relationships, transcripts, bounding boxes, etc.

In computer vision, for example, annotations can include global classification labels, bounding boxes around objects, segmentation masks that assign a category to each pixel, annotations of key points (human joints, facial points), or trajectories along a video. In text, we talk about named-entity annotations (people, organizations, places), document classification, syntactic analysis, relationships between entities, or sentiment tags.
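
For text, an annotation is often stored as character offsets plus a label. The sketch below shows one possible in-memory shape for such a record; the class names, the label set (`ORG`, `LOC`), and the example sentence are assumptions, and real annotation tools each define their own export formats.

```python
from dataclasses import dataclass, field


@dataclass
class EntitySpan:
    start: int  # index of the first character of the entity
    end: int    # index one past the last character
    label: str


@dataclass
class AnnotatedText:
    text: str
    entities: list = field(default_factory=list)

    def add_entity(self, surface, label):
        """Annotate the first occurrence of `surface` in the text."""
        start = self.text.find(surface)
        if start == -1:
            raise ValueError(f"{surface!r} not found in text")
        self.entities.append(EntitySpan(start, start + len(surface), label))

    def surface_forms(self):
        """Return (entity text, label) pairs recovered from the offsets."""
        return [(self.text[e.start:e.end], e.label) for e in self.entities]


# Hypothetical example sentence.
record = AnnotatedText("OpenAI opened an office in San Francisco.")
record.add_entity("OpenAI", "ORG")
record.add_entity("San Francisco", "LOC")
```

Storing offsets instead of copies of the text keeps the annotation tied to an exact position, which matters when the same name appears several times with different roles.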

The goal of all this work is for the models to have clear examples of what they should recognize or predict. A dataset of annotated images with cats, cars, and pedestrians allows training models that detect and classify these objects; a dataset of conversations with intent and emotion tags allows training chatbots and sentiment analysis systems; a documentary corpus with marked entities and explicit relationships lays the foundation for knowledge extraction systems.

Annotation is not limited to text or images: in audio, tasks include transcription, tagging sound events (applause, laughter, gunshots), segmentation by speaker, and timestamping of keywords. And in multimodal data (video with audio and subtitles, for example), work is done on aligning modalities and annotating interactions together (facial expression plus tone of voice plus textual content).

In the realm of structured and tabular data, annotating also means explaining the meaning of columns and values. This includes linking equivalent entries across databases or marking relevant attributes for prediction tasks. Each project type will choose one or another set of annotations, and often several are combined to cover complex use cases.

Tools and processes for annotating a file as a dataset

Annotating data at scale requires specific tools and well-thought-out workflows. Manually labeling a few dozen images is not the same as managing hundreds of thousands of examples in multiple formats with teams spread across several time zones.

In computer vision, open-source solutions such as LabelImg (for bounding boxes), CVAT (for complex image and video annotation with collaborative features), or commercial platforms like Labelbox or SuperAnnotate are used, incorporating quality analytics, project management, and automation. For text, tools like Prodigy, LightTag, BRAT, or Datasaur facilitate the annotation of entities, classifications, syntactic dependencies, and relationships, with detailed tracking of annotators and conflicts.

In audio, software like Label Studio, Praat, or Sonix allows for precise segmentation, transcription, and labeling of sounds. For multimodal data, lightweight projects such as the VGG Image Annotator (VIA) or applications like RectLabel offer a versatile foundation for synchronizing annotations across data types. And for projects requiring high volume and speed, there are automation and mass-collaboration platforms such as Amazon SageMaker Ground Truth, Scale AI, or DataLoop, as well as collaborative solutions such as Digma or Hive Data.

Choosing a tool isn't just a matter of personal preference: you need to consider the type of data, budget, team size, the need for real-time collaboration, and the size of the dataset. Almost all advanced platforms include features for validating basic rules, managing conflicts, measuring productivity and quality, and combining human annotation with automated suggestions generated by pre-existing models.

Beyond the tool itself, quality is determined by the process. That means providing very clear and up-to-date annotation guides, training annotators (especially in sensitive fields like medicine), organizing cross-reviews, defining quality metrics (inter-annotator agreement, accuracy, recall), and using expert-validated "gold standard" data to calibrate the team. Annotating a file as an intellectual dataset is not a sprint, but a continuous, iterative cycle.
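
Inter-annotator agreement is frequently reported as Cohen's kappa, which corrects the raw agreement rate for the agreement two annotators would reach by chance: kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the expected chance agreement. The sketch below computes it for two annotators; the labels are toy data and the function name is just illustrative.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if both annotators labeled at random
    # according to their own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both annotators always used the same single label
    return (observed - expected) / (1 - expected)


# Toy sentiment labels from two hypothetical annotators.
annotator_1 = ["pos", "pos", "neg", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg"]
kappa = cohen_kappa(annotator_1, annotator_2)
```

In this toy case the annotators agree on three of four items (p_o = 0.75), chance agreement is 0.5, and kappa comes out at 0.5, noticeably less flattering than the raw 75%.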

Many projects opt for a hybrid approach: simple or repetitive tasks (e.g., preliminary object detection or text pre-classification) are automated, and human work is reserved for ambiguous, complex, or high-impact cases. This reduces costs without sacrificing quality and allows annotators to focus where they add the most value.
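
A simple way to implement that division of labor is to route items by the confidence of the automatic pre-labeling. The sketch below assumes each prediction carries a confidence score between 0 and 1; the 0.9 threshold, the field names, and the sample predictions are arbitrary choices for illustration.

```python
def route(predictions, threshold=0.9):
    """Split item ids into auto-accepted and sent-to-human-review lists."""
    auto_accepted, needs_review = [], []
    for item in predictions:
        if item["confidence"] >= threshold:
            auto_accepted.append(item["id"])
        else:
            needs_review.append(item["id"])
    return auto_accepted, needs_review


# Hypothetical pre-labels produced by an existing model.
predictions = [
    {"id": "d1", "label": "invoice", "confidence": 0.97},
    {"id": "d2", "label": "contract", "confidence": 0.55},
    {"id": "d3", "label": "invoice", "confidence": 0.90},
]
auto_accepted, needs_review = route(predictions)
```

In practice the threshold is usually tuned against gold-standard data, and a sample of the auto-accepted items is still reviewed to catch confident mistakes.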

Real-world applications of annotated and intellectual datasets

Treating a file as an intellectual dataset is not a theoretical exercise: it has very concrete applications in multiple sectors where annotation and curation are key to deploying reliable AI. In computer vision, for example, annotated image and video sets allow the development of facial recognition systems, anomaly detection in X-rays or MRIs, automated factory inspections, or autonomous vehicles that identify pedestrians, signs, and obstacles.

In natural language processing, well-localized and annotated datasets enable tasks such as sentiment analysis on social media, classification of queries in customer service, entity extraction in legal documents, machine translation, or training chatbots that understand emotional nuances and cultural contexts. A personal archive of articles can become the basis of a specialized assistant that, before you publish something new, reviews what has already been said, detects repetitions, or identifies changes of position over the years.

Health and biotechnology increasingly rely on highly annotated datasets: medical images with confirmed diagnoses, structured clinical records, and genomic sequences labeled with relevant mutations. In automotive and transportation, data is essential for autonomous driving, intelligent route planning, and traffic management. In commerce and banking, it is used for product recommenders, fraud detection, and risk analysis.

Other fields such as precision agriculture, the environment, security and defense, video games, virtual reality, education, and scientific research use annotated datasets to monitor crops, analyze satellite images, create immersive experiences, personalize learning, or accelerate discoveries in biology or astrophysics. In all of them, the logic is similar: the better structured and documented the dataset (for example, with README files), the better the models built on it will be.

If we view the file as an intellectual dataset on a personal level, the value lies in being able to consult on the fly everything you know and have written: understanding how your ideas have evolved, discovering thematic gaps, connecting texts from distant periods, and leveraging that extended memory in other contexts, such as teaching, research, or business strategy. All this without delegating the writing to the machine, but rather using AI as a support tool to verify data, search for references, or test arguments.

Where to find datasets and how their creation is being automated

Not all datasets originate from personal files: many come from open repositories and public sources that are useful for training and evaluating models, or for research projects, data journalism, and strategic analysis. The internet already offers a wealth of resources that can be leveraged, always with due legal and ethical considerations.

The social network X (formerly Twitter), for example, offers APIs that allow users to collect tweets filtered by hashtags and other criteria. This data can then be structured into tables and visualized with tools like Tableau. Google Dataset Search provides a specialized search engine for public datasets, where users can find everything from enterprise databases to industry statistics. Blogs like FiveThirtyEight publish their datasets on politics, sports, and society so that anyone can replicate or extend their analyses.

In parallel, simulated environments are increasingly being used to automatically generate annotated data, especially in fields like autonomous vehicles, where recreating all the conditions of reality in the physical world would be slow, expensive, and dangerous. Simulators allow the production of images and videos with perfect annotations (positions of objects, weather conditions, trajectories) and the exploration of extreme scenarios that are difficult to capture in real-world data.
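
The reason simulated annotations are "perfect" is that the generator knows exactly what it placed in the scene. The toy sketch below illustrates only that principle: it produces scene descriptions with object labels, bounding boxes, and a weather condition, without rendering any image. The label set, image size, and field names are assumptions, and a real simulator would of course be far richer.

```python
import random

LABELS = ["car", "pedestrian", "sign"]
WEATHER = ["clear", "rain", "fog"]


def generate_scene(rng, width=640, height=480, max_objects=3):
    """Generate a synthetic scene description with exact annotations.

    Each bounding box is (x, y, w, h) and always fits inside the frame.
    """
    objects = []
    for _ in range(rng.randint(1, max_objects)):
        w = rng.randint(20, 100)
        h = rng.randint(20, 100)
        x = rng.randint(0, width - w)
        y = rng.randint(0, height - h)
        objects.append({"label": rng.choice(LABELS), "bbox": (x, y, w, h)})
    return {"weather": rng.choice(WEATHER), "objects": objects}


# A seeded generator makes the synthetic dataset reproducible.
rng = random.Random(42)
scenes = [generate_scene(rng) for _ in range(5)]
```

Because the generator is seeded, the same scenes can be regenerated at any time, and rare conditions can be oversampled simply by changing how the weather or object labels are drawn.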

The future points to a combination of curated real-world data, personal archives treated as intellectual corpora, and synthetic data generated in simulation, all coordinated with supervised, semi-supervised, unsupervised, and self-supervised learning techniques. Annotation will remain a key element, but increasingly assisted by models that propose labels, detect inconsistencies, and measure biases, leaving the finer decisions to people.

In this context, the ethical and regulatory dimension becomes important: handling personal files as datasets requires respecting privacy, copyright and usage expectations, while crowdsourcing or mass collaboration platforms must ensure fair conditions for annotators and transparency about which models are trained with the data they generate.

When this entire chain is carefully managed, from extracting a personal file to vectorizing it, building its graph, localizing it, annotating it, automating updates, and connecting it to AI assistants, you obtain something that very few authors, companies, or organizations have today: a deep, consistent, and living intellectual dataset capable of powering artificial intelligence systems truly aligned with the voice, context, and goals of those who drive them, and of serving as extended memory in an increasingly information-saturated world.
