- Exascale computing delivers at least 10^18 operations per second, far surpassing petascale supercomputers.

- Its architecture is based on millions of cores, heterogeneous processors, and ultra-high-speed memory and network systems to process data in parallel.

- Exascale drives advances in climate, medicine, industry, finance, AI, and national security, with a direct impact on science and the economy.

- Its main challenges are energy consumption, the development of scalable software, massive data management, and ethical and environmental dilemmas.

Exascale computing marks a turning point in the history of computers. We're talking about machines that can do in one second what would take a regular laptop decades, something that sounded almost like science fiction a few years ago and is already a reality in several supercomputing centers around the world.

This new league of high-performance supercomputing doesn't just affect scientists and large laboratories: it has direct implications for industry, the economy, health, climate, and security. From accelerating drug design and improving weather forecasts to training gigantic artificial intelligence models, exascale has become a key piece of the global digital infrastructure.

What exactly is exascale computing?

When we talk about exascale, we are referring to systems capable of at least one exaflop, that is, ten to the power of eighteen (10^18) floating-point operations per second. In other words, a quintillion operations every second, a figure that is hard to imagine unless we compare it with what we have at home.

To give you an idea, an exascale supercomputer can match the combined power of hundreds of thousands of laptops running simultaneously. Depending on the specific system, estimates put the equivalence at more than 100,000 computers, in some cases exceeding the computing power of roughly 270,000 personal devices running at full load.
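
These equivalences depend entirely on how fast the reference laptop is assumed to be. The short Python sketch below does the arithmetic under two hypothetical laptop ratings (1 gigaflop and 10 teraflops per second, our own assumptions rather than figures for any real device): the ratio of speeds is both the number of laptops needed to match one exaflop and the number of seconds a single laptop needs to redo one second of exascale work.

```python
# Back-of-the-envelope comparison between one exaflop and a laptop.
# ASSUMPTION: the laptop ratings below are illustrative, not measurements.

EXAFLOP = 10**18                      # floating-point operations per second
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def speed_ratio(system_flops: float, laptop_flops: float) -> float:
    """How many times faster the system is than the laptop.

    Read it two ways: laptops needed (with perfect parallelism) to match
    the system, or seconds one laptop needs to redo one second of its work.
    """
    return system_flops / laptop_flops


for label, laptop_flops in [("1 GFLOP/s laptop", 1e9), ("10 TFLOP/s laptop", 1e13)]:
    ratio = speed_ratio(EXAFLOP, laptop_flops)
    years = ratio / SECONDS_PER_YEAR
    print(f"{label}: {ratio:,.0f} laptops, or {years:.3g} years per exascale second")
```

With the gigaflop-class laptop, one second of exascale work takes about 32 years, in line with the "decades" mentioned at the start. The 100,000-computer figure corresponds to machines of about 10 teraflops each, and 270,000 devices to roughly 3.7 teraflops each.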

This leap in performance is based on the philosophy of high-performance computing (HPC), which involves grouping thousands or millions of interconnected computing resources and having them work in parallel on the same problem. When well coordinated, this massive sum of power makes it possible to run simulations and models so complex that a conventional computer couldn't even begin to solve them in a reasonable timeframe.
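
The core pattern (split one problem into independent pieces, compute them simultaneously, then combine the partial results) can be sketched in a few lines of Python. This is a toy, not how production HPC codes are written: standard-library threads stand in for compute nodes, and the "problem" is simply a sum of squares. In CPython the global interpreter lock means these threads illustrate the structure rather than deliver a real speedup; real systems spread the pieces across separate processors with tools such as MPI.

```python
from concurrent.futures import ThreadPoolExecutor


def sum_of_squares(indices: range) -> int:
    """The independent sub-task handed to one worker."""
    return sum(i * i for i in indices)


def parallel_sum_of_squares(n: int, workers: int) -> int:
    # Round-robin (cyclic) split: worker w gets indices w, w + workers, w + 2*workers, ...
    chunks = [range(w, n, workers) for w in range(workers)]
    # Run the sub-tasks concurrently (threads stand in for compute nodes here).
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_results = list(pool.map(sum_of_squares, chunks))
    # Reduction step: combine the partial results into the final answer.
    return sum(partial_results)


n = 1_000_000
result = parallel_sum_of_squares(n, workers=8)
print(result == (n - 1) * n * (2 * n - 1) // 6)  # check against the closed form
```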

Exascale thus marks the next stage after petascale supercomputers, which operated in the petaflop range (10^15 operations per second). After thoroughly testing that generation, the scientific community found that computing needs kept growing at a brutal rate, driven by the avalanche of data and increasingly sophisticated problems.

A bit of history: from ENIAC to exascale giants

To understand the leap we've made, it helps to look back. ENIAC, considered the first general-purpose electronic computer, was unveiled in 1946 in the United States. It weighed approximately 27 tons, occupied around 167 square meters, and could perform some 5,000 operations per second, a figure that would seem ridiculous today compared with any low-end laptop.

Modern personal computers operate in the gigaflop range, that is, billions of operations per second. In just a few decades, we've gone from giant vacuum-tube computers that filled an entire room to portable devices that fit in a backpack and outperform those pioneers millions of times over.

In supercomputing, the progress has been even more spectacular. The power of HPC systems has grown roughly tenfold every four years over the last three decades. This exponential growth has been the driving force that has taken us, step by step, from the first vector supercomputers to today's heterogeneous monsters with millions of computing cores.
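
A tenfold increase every four years compounds quickly. A minimal Python check of that rule of thumb, taking the article's rough rate as the only input, shows what it implies over three decades and how long the thousandfold jump from petascale to exascale should take.

```python
import math


def growth_factor(years: float, tenfold_period: float = 4.0) -> float:
    """Performance multiplier after `years`, assuming 10x every `tenfold_period` years."""
    return 10 ** (years / tenfold_period)


def years_for_factor(factor: float, tenfold_period: float = 4.0) -> float:
    """Years needed to grow by `factor` at that same rate."""
    return tenfold_period * math.log10(factor)


print(f"Three decades of growth: x{growth_factor(30):.2e}")                  # x3.16e+07
print(f"Petascale to exascale (x1000): {years_for_factor(1000):.0f} years")  # 12 years
```

The rule predicts about 12 years for the jump. In practice, the first system to sustain a petaflop, IBM's Roadrunner, arrived in 2008, and Frontier crossed the exaflop in 2022, 14 years later, so the rule works as a reasonable approximation rather than an exact law.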

The exascale milestone was reached in 2022 with Frontier, installed at Oak Ridge National Laboratory (United States). This system was the first to officially break the sustained exaflop barrier, opening the door to a new era of scientific and industrial computing and becoming the global benchmark for performance.

Very soon after, the race picked up pace. According to the TOP500 ranking, the supercomputer El Capitan has established itself as one of today's giants, with around 1.7 exaflops of sustained performance and close to 2.7 exaflops of theoretical peak. The United States dominates the top of the rankings and Europe has several systems in the top ten, while China, despite its enormous progress, tends not to publish all of its developments for strategic reasons.
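
The gap between those two figures is normal. TOP500 ranks machines by the performance they sustain on the HPL (LINPACK) benchmark, called Rmax, while the theoretical peak, Rpeak, assumes every unit is busy all the time. A small Python helper, using only the rounded numbers quoted above, gives the fraction of peak El Capitan actually reaches.

```python
def hpl_efficiency(rmax: float, rpeak: float) -> float:
    """Fraction of the theoretical peak (Rpeak) sustained on the benchmark (Rmax)."""
    if rpeak <= 0:
        raise ValueError("rpeak must be positive")
    return rmax / rpeak


# Rounded El Capitan figures from the text, in exaflops.
efficiency = hpl_efficiency(rmax=1.7, rpeak=2.7)
print(f"El Capitan sustains about {efficiency:.0%} of its theoretical peak")  # about 63%
```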

How an exascale supercomputer works

An exascale system is not simply one giant computer. It is a massive array of interconnected computing nodes, each with its own CPU, GPU, and memory, working in a coordinated way on the same problem. The key lies in extreme parallel processing, which divides a huge task into thousands or millions of subtasks that run simultaneously.
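
How a problem is divided among nodes matters. One common approach, shown here as a Python sketch under the simplifying assumption of a one-dimensional grid, is a block distribution: each node owns one contiguous slice of the domain, and leftover cells are handed out one per node so that no node gets more than one extra cell of work. The function name and the node count in the example are hypothetical.

```python
def block_range(n_items: int, n_nodes: int, rank: int) -> range:
    """Indices of a 1-D domain owned by node `rank` under a block distribution.

    The first (n_items % n_nodes) nodes receive one extra item, so chunk
    sizes never differ by more than one.
    """
    base, extra = divmod(n_items, n_nodes)
    start = rank * base + min(rank, extra)
    size = base + (1 if rank < extra else 0)
    return range(start, start + size)


# A grid of 10 cells split over 4 hypothetical nodes.
for rank in range(4):
    print(rank, list(block_range(10, 4, rank)))
# 0 [0, 1, 2]
# 1 [3, 4, 5]
# 2 [6, 7]
# 3 [8, 9]
```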

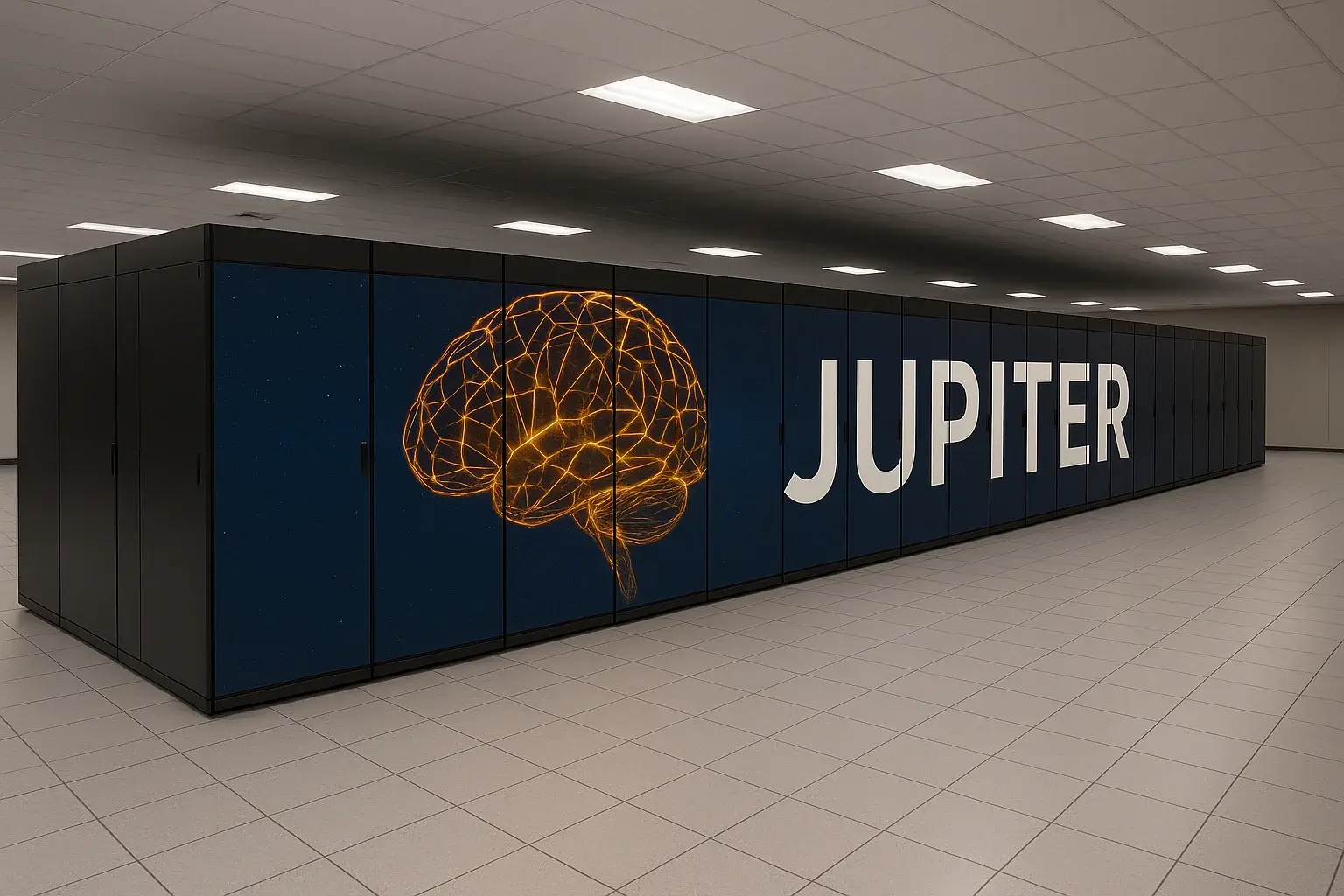

The architecture of supercomputers such as Jupiter in Germany or LUMI in Finland is based on this idea: many specialized nodes, extremely high-speed interconnection networks, carefully designed hierarchical memories, and advanced cooling and power systems. Only with this kind of design can a supercomputer solve a “superproblem” by breaking it down into small pieces and processing them in parallel.

These machines usually combine traditional CPUs with GPUs and other accelerators, programmed through software platforms such as NVIDIA's CUDA. CPUs handle control tasks, sequential processes, and work that is hard to parallelize, while GPUs, and in some cases FPGAs, take on the massive calculations that can run in parallel. This mix of processors is known as a heterogeneous architecture, and it is essential for achieving maximum performance within reasonable power limits.

Another critical piece is the high-speed interconnect. It's not enough to have many nodes; they need to communicate with one another with very low latency and enormous bandwidth. Otherwise, the system spends more time waiting for data to arrive than actually computing. That's why manufacturers develop network technologies designed specifically for HPC, with carefully tuned topologies and protocols.
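
Why latency matters so much can be seen with the simple latency-bandwidth model often used to reason about networks: each message costs a fixed start-up time plus its size divided by the bandwidth. In this Python sketch the link figures (2 microseconds and 25 GB/s) are assumed round numbers, not the specifications of any real interconnect. Moving the same gigabyte as one message or as a million small ones gives very different times.

```python
def transfer_time(message_bytes: float, latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Latency-bandwidth model: fixed start-up cost plus streaming time."""
    return latency_s + message_bytes / bandwidth_bytes_per_s


# ASSUMED link parameters, chosen only for illustration.
LATENCY_S = 2e-6                 # 2 microseconds per message
BANDWIDTH_BYTES_PER_S = 25e9     # 25 gigabytes per second

one_big_message = transfer_time(1e9, LATENCY_S, BANDWIDTH_BYTES_PER_S)
million_small_messages = 1_000_000 * transfer_time(1e3, LATENCY_S, BANDWIDTH_BYTES_PER_S)

print(f"1 GB as one message:      {one_big_message:.3f} s")
print(f"1 GB as 1,000,000 x 1 KB: {million_small_messages:.3f} s")
```

Almost all of the second figure is latency (2 of its roughly 2.04 seconds), which is why HPC codes try to batch communication into fewer, larger messages.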

Finally, the memory and storage system is hierarchical: very fast but small memories next to the processor (caches), larger but somewhat slower main memory, and secondary and tertiary storage levels (such as SSDs and tapes) where gigantic datasets and simulation results are kept. This combination keeps "hot" data close to the processor, while the rest is stored more efficiently in cheaper layers.
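
The payoff of keeping hot data close can be illustrated with a tiny simulation of one fast level in front of a slow one. This Python sketch assumes a least-recently-used (LRU) replacement policy, a common textbook choice that real hardware caches only approximate, and counts how often a request is served from the fast level. A program that keeps reusing a small working set almost always hits; one that streams through data once never does.

```python
from collections import OrderedDict


class LRUCache:
    """Toy model of a small, fast memory level in front of a large, slow one."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.lines = OrderedDict()   # cached addresses, least recently used first
        self.hits = 0
        self.misses = 0

    def access(self, address: int) -> None:
        if address in self.lines:
            self.hits += 1
            self.lines.move_to_end(address)        # now the most recently used
        else:
            self.misses += 1                       # fetched from the slow level
            self.lines[address] = True
            if len(self.lines) > self.capacity:
                self.lines.popitem(last=False)     # evict the least recently used


def hit_rate(trace: list[int], capacity: int) -> float:
    cache = LRUCache(capacity)
    for address in trace:
        cache.access(address)
    return cache.hits / len(trace)


hot_loop = [0, 1, 2, 3] * 100     # small working set reused over and over
streaming = list(range(400))      # every address touched exactly once
print(hit_rate(hot_loop, capacity=8))   # 0.99
print(hit_rate(streaming, capacity=8))  # 0.0
```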

Technical challenges of the exascale

Building and operating an exascale supercomputer is not simply a matter of stacking more hardware. It involves enormous technical challenges related to energy consumption, software design, reliability, and data management, which require innovation on many fronts.

Energy efficiency and cooling

One of the most serious problems is the enormous electricity consumption of these systems. Cutting-edge supercomputers already operate in the range of several megawatts of power, comparable to the consumption of thousands of homes. A naive leap into the exascale could have driven those numbers to unsustainable levels, both economically and environmentally.

To avoid this, designers have been forced to develop architectures that are far more efficient in terms of performance per watt. Latest-generation GPUs, CPUs optimized for HPC, and power supply and distribution systems have all evolved to squeeze ever more computation out of every watt consumed.
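
Performance per watt translates directly into megawatts. As a hedged illustration, the Python helpers below compute the power an exaflop machine would draw at two hypothetical efficiency levels (5 and 50 gigaflops per watt, round numbers chosen for the example, not measured values for any system).

```python
def gflops_per_watt(flops: float, power_watts: float) -> float:
    """Energy efficiency in gigaflops per watt."""
    return flops / power_watts / 1e9


def power_needed_mw(flops: float, efficiency_gflops_per_watt: float) -> float:
    """Power in megawatts needed to sustain `flops` at a given efficiency."""
    return flops / (efficiency_gflops_per_watt * 1e9) / 1e6


EXAFLOP = 1e18
for efficiency in (5, 50):  # hypothetical efficiency levels
    print(f"{efficiency} GFLOPS/W -> {power_needed_mw(EXAFLOP, efficiency):.0f} MW")
# 5 GFLOPS/W -> 200 MW
# 50 GFLOPS/W -> 20 MW
```

Under these assumptions, a tenfold gain in efficiency is the difference between 200 MW and 20 MW for exactly the same amount of computation.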

Cooling is another key element. An exascale supercomputer generates a tremendous amount of heat that must be dissipated safely. That's why solutions such as direct liquid cooling, or locating data centers in cold climates, are used. In cases like LUMI in Finland, waste heat is even fed into district heating networks, reducing environmental impact and improving overall efficiency.

Software, extreme parallelism, and fault tolerance

Hardware alone is not enough. An equally big challenge is developing software that scales efficiently to millions of computing cores. Traditional programming models fall short when threads and processes multiply to these levels.
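
A standard way to see why is Amdahl's law (a classic model we bring in here, not one cited in this article): if a fraction p of a program can run in parallel and the rest is serial, the speedup on N cores is 1 / ((1 - p) + p / N). The Python sketch below evaluates it for a million cores.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: speedup when only `parallel_fraction` of the work scales."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


for p in (0.99, 0.9999, 0.999999):
    print(f"parallel fraction {p}: speedup ~{amdahl_speedup(p, 1_000_000):,.0f}")
```

Even with 99% of the work parallelized, a million cores deliver only about a 100x speedup, because the serial remainder dominates. That is why software for these machines has to remove almost every serial bottleneck.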

Programmers must design algorithms with massive parallelism, minimizing communication between nodes, balancing loads, and managing memory effectively so that the system does not become overwhelmed by its own complexity. This requires new libraries, runtime environments, compilers, and performance analysis tools designed specifically for exascale computing.
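
Load balancing is easy to illustrate. The Python sketch below uses the greedy "longest processing time first" heuristic, one of many possible strategies and not necessarily what any exascale runtime uses: tasks are taken from most to least expensive, and each goes to the node with the lightest load so far. The task costs are made-up numbers.

```python
import heapq


def assign_tasks(costs: list[float], n_nodes: int) -> list[list[int]]:
    """Greedy 'longest processing time first' load balancing.

    Tasks are taken from most to least expensive; each goes to the node whose
    current load is smallest. Returns the task indices assigned to each node.
    """
    heap = [(0.0, node) for node in range(n_nodes)]   # (current load, node id)
    heapq.heapify(heap)
    assignment: list[list[int]] = [[] for _ in range(n_nodes)]
    for task in sorted(range(len(costs)), key=lambda t: costs[t], reverse=True):
        load, node = heapq.heappop(heap)              # least-loaded node
        assignment[node].append(task)
        heapq.heappush(heap, (load + costs[task], node))
    return assignment


def makespan(costs: list[float], assignment: list[list[int]]) -> float:
    """Load of the busiest node: the time the whole job waits for."""
    return max(sum(costs[t] for t in tasks) for tasks in assignment)


costs = [7, 5, 4, 3, 3, 2]            # hypothetical task costs
balanced = assign_tasks(costs, n_nodes=2)
naive = [[0, 1, 2], [3, 4, 5]]        # first half to node 0, second half to node 1
print("greedy makespan:", makespan(costs, balanced))  # 12
print("naive makespan: ", makespan(costs, naive))     # 16
```

Since the job finishes only when its busiest node does, the greedy split completes in 12 time units instead of 16.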

On top of that comes the need for fault tolerance. With so many components, an exascale supercomputer is virtually certain to suffer hardware failures frequently. The software must be able to detect errors, recover data, and keep tasks running without restarting from scratch simulations that can take days.
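
Two classic back-of-the-envelope formulas make this concrete. If nodes fail independently, the machine's mean time between failures (MTBF) is roughly the node MTBF divided by the node count; and Young's approximation suggests checkpointing (saving the simulation state so it can resume after a crash) about every sqrt(2 * checkpoint cost * MTBF). The node reliability, node count, and checkpoint cost in this Python sketch are assumptions for illustration only.

```python
import math

HOURS_PER_YEAR = 8760


def system_mtbf(node_mtbf_hours: float, n_nodes: int) -> float:
    """Machine-wide MTBF, assuming independent, exponentially distributed node failures."""
    return node_mtbf_hours / n_nodes


def young_interval(checkpoint_cost_hours: float, mtbf_hours: float) -> float:
    """Young's first-order approximation of the optimal time between checkpoints."""
    return math.sqrt(2 * checkpoint_cost_hours * mtbf_hours)


# ASSUMED figures: each node fails once every 5 years on average,
# the machine has 10,000 nodes, and one checkpoint takes 5 minutes.
mtbf = system_mtbf(5 * HOURS_PER_YEAR, 10_000)
interval = young_interval(5 / 60, mtbf)
print(f"machine MTBF: {mtbf:.2f} h, checkpoint every ~{interval * 60:.0f} min")
```

Under these assumptions, nodes that individually last years add up to a machine that fails every few hours, so saving state roughly every 50 minutes becomes part of normal operation.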

Data storage and management architecture

Exascale simulations generate and consume astronomical amounts of information. A numerical experiment can produce petabytes of data that must be stored, organized, and made available to researchers. That's why storage architecture is just as important as computing architecture.

Supercomputing centers are implementing hierarchical storage systems that combine very fast storage (such as NVMe SSDs) with high-capacity solutions (hard drives, tapes, scientific cloud storage, etc.). They also use compression, deduplication, and intelligent file management techniques to save space and speed up data access.
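
Deduplication and compression can be combined in a simple way: cut the data into chunks, identify each chunk by a cryptographic hash, and store one compressed copy of each distinct chunk plus a "recipe" for rebuilding the file. This Python sketch assumes fixed-size 4 KB chunks, SHA-256, and zlib, all standard-library choices made for the example; production storage systems use their own, more elaborate schemes.

```python
import hashlib
import zlib


def store_deduplicated(data: bytes, chunk_size: int = 4096):
    """Return (store, recipe): unique compressed chunks keyed by hash, and
    the ordered list of hashes needed to rebuild `data`."""
    store: dict[str, bytes] = {}
    recipe: list[str] = []
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:                     # deduplication: keep one copy
            store[digest] = zlib.compress(chunk)    # compression of that copy
        recipe.append(digest)
    return store, recipe


def restore(store: dict[str, bytes], recipe: list[str]) -> bytes:
    """Rebuild the original bytes from the chunk store and the recipe."""
    return b"".join(zlib.decompress(store[digest]) for digest in recipe)


# Highly repetitive example data: two distinct 4 KB blocks repeated 50 times.
data = (b"A" * 4096 + b"B" * 4096) * 50
store, recipe = store_deduplicated(data)
stored_bytes = sum(len(blob) for blob in store.values())
print(f"{len(data):,} bytes -> {len(store)} unique chunks, {stored_bytes} bytes stored")
print("round trip ok:", restore(store, recipe) == data)
```

This example data is deliberately repetitive; real scientific datasets usually compress and deduplicate far less.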

Main areas of application of the exascale

The computing power of exascale translates into concrete applications in virtually every field with a high degree of digitization. Each sector finds in these systems a tool for tackling problems that were previously intractable, or that at least took too long to be practical.

Scientific research and climate modeling

One of the biggest beneficiaries is climate research. Earth system models must simulate the atmosphere, oceans, cryosphere, and biosphere, and their interactions, across multiple spatial and temporal scales. Exascale computers make it possible to build much more detailed climate models, with finer resolutions and longer time horizons.

Thanks to this capability, it is possible to predict extreme weather events more precisely, study climate change scenarios at a regional level, and virtually experiment with different mitigation policies. Projects such as Destination Earth aim to create a digital twin of the planet, a high-fidelity global climate model to support decision-making by governments, businesses, and international organizations.

In other scientific fields, exascale supercomputers can simulate processes ranging from the subatomic scale to the cosmological. In astrophysics, for example, the formation of galaxies, the behavior of black holes, and stellar explosions can be reproduced with a level of detail and realism that was previously impossible.

Materials science, genomics and medicine

Exascale is also revolutionizing materials science, allowing researchers to study the behavior of new alloys, compounds, and structures at the atomic level. This accelerates the design of stronger, lighter, or more efficient materials for sectors such as energy, transportation, and construction.

In the biomedical field, supercomputers have played a key role, for example, in the development of vaccines against COVID-19. The ability to analyze huge genomic databases, simulate molecular interactions, and test thousands of candidate compounds in virtual models drastically reduces the time it takes to discover new drugs and treatments.

Exascale systems also support progress toward personalized medicine, where treatments tailored to each patient's genetic and clinical profile can be simulated. European projects involving supercomputing centers such as the one in Barcelona are investigating the use of supercomputers and artificial intelligence to improve the diagnosis and treatment of various types of cancer.

Industry, economics and process simulation

Outside the laboratory, companies use exascale computing to optimize production processes, design more competitive products, and reduce costs. In the automotive industry, for example, these systems are used to simulate vehicles under all kinds of conditions and to study aerodynamics, energy efficiency, and the behavior of new engines.

A striking example is projects such as those at GE Aerospace, where supercomputers are used to analyze jet engine designs aimed at cutting CO2 emissions by up to 20%. Simulations like these would have been unthinkable without the computing power and level of detail offered first by petascale and now by exascale systems.

In the financial sector, these systems make it possible to simulate financial services and products under diverse regulatory and market scenarios, analyze complex risks, and run sensitivity studies in greater depth than traditional techniques allow. This helps large banks, insurers, and investment funds make better-informed decisions.
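
Much of this work is Monte Carlo simulation: generate a very large number of random market scenarios and read risk measures off the resulting distribution of losses. As a deliberately simplified Python sketch, the code below estimates the one-day 99% value-at-risk (VaR) of a single position, assuming normally distributed returns; the position size, mean, and volatility are hypothetical, and real risk engines model many correlated instruments. Under this assumption there is also a closed-form answer to check against.

```python
import random
from statistics import NormalDist


def monte_carlo_var(value: float, mu: float, sigma: float, n_scenarios: int,
                    confidence: float = 0.99, seed: int = 42) -> float:
    """Value-at-risk estimated as an empirical quantile of simulated losses."""
    rng = random.Random(seed)                       # seeded for reproducibility
    # Loss in each scenario is minus the position value times a random return.
    losses = sorted(-value * rng.gauss(mu, sigma) for _ in range(n_scenarios))
    index = min(int(confidence * n_scenarios), n_scenarios - 1)
    return losses[index]


# ASSUMED position and return model: 1,000,000 invested, daily mean 0, volatility 2%.
VALUE, MU, SIGMA = 1_000_000, 0.0, 0.02
simulated = monte_carlo_var(VALUE, MU, SIGMA, n_scenarios=200_000)
analytic = VALUE * (SIGMA * NormalDist().inv_cdf(0.99) - MU)  # closed form for the normal model
print(f"Monte Carlo VaR: {simulated:,.0f}   analytic: {analytic:,.0f}")
```

In this toy the two numbers agree closely. Supercomputers matter when the same idea is applied to thousands of instruments and far more scenarios, where no closed form exists.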

In addition, many technology providers already offer supercomputing as HPC as a Service, that is, as a cloud service. This way, companies that cannot afford their own supercomputer can access exascale resources on demand to accelerate their design, simulation, or data analysis projects.

Artificial intelligence and machine learning

Artificial intelligence, and deep learning in particular, is another major driver of exascale computing. Current AI models, from natural language processing systems to computer vision algorithms, require enormous volumes of data and colossal computing power to train.

Exascale computing makes it possible to train gigantic neural networks, explore more complex model architectures, and significantly reduce the time needed to deploy new generations of algorithms. This opens the door to radical improvements in fields such as speech recognition, machine translation, autonomous driving, and recommendation systems.
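
A common way to spread training across many processors is data parallelism: each worker computes the gradient on its own shard of the data, and the gradients are averaged (on a real cluster, through a collective operation such as all-reduce) before every update. The Python sketch below shows the idea on a one-parameter linear model with made-up data; with equal-size shards, the averaged gradient equals the gradient over the full dataset.

```python
def gradient(w: float, xs: list[float], ys: list[float]) -> float:
    """Gradient of the mean squared error for the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_gradient(w: float, xs: list[float], ys: list[float], workers: int) -> float:
    """Each worker handles one equal-size shard; their gradients are averaged."""
    if len(xs) % workers != 0:
        raise ValueError("this sketch assumes equal-size shards")
    size = len(xs) // workers
    shard_grads = [gradient(w, xs[k * size:(k + 1) * size], ys[k * size:(k + 1) * size])
                   for k in range(workers)]
    return sum(shard_grads) / workers       # stands in for an all-reduce average


# Synthetic data following y = 3x (hypothetical example).
xs = [i / 100 for i in range(1, 101)]
ys = [3 * x for x in xs]

w, learning_rate = 0.0, 0.5
for _ in range(200):
    w -= learning_rate * data_parallel_gradient(w, xs, ys, workers=4)
print(f"learned w = {w:.4f}")               # converges toward 3
```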

By combining exascale with large datasets, machine learning algorithms can learn patterns more quickly and accurately, which translates into smarter and more useful systems for society and the economy. At the same time, debates arise about the best way to regulate and ethically use these advances.

National security and cyber defense

In the field of security, exascale supercomputers are used to simulate nuclear weapons and other defense systems without the need for real-world testing, something of great importance from a geopolitical and non-proliferation point of view.

They are also used in advanced cryptanalysis, that is, the study and potential breaking of complex ciphers, as well as in designing new cryptographic techniques that resist attacks by supercomputers and even future quantum computers.
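
The arithmetic behind "resistant to supercomputers" is worth seeing. The Python sketch below estimates how long an exhaustive key search would take under the deliberately generous assumption (ours, not the article's) that a machine could test 10^18 keys per second, one per floating-point operation. A 56-bit key, the size used by the old DES standard, falls in a fraction of a second; a 128-bit key does not.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def brute_force_seconds(key_bits: int, keys_per_second: float) -> float:
    """Worst-case time to try every key (on average, half of this)."""
    return 2 ** key_bits / keys_per_second


RATE = 1e18  # ASSUMED: one key tested per floating-point operation at one exaflop
print(f"56-bit key:  {brute_force_seconds(56, RATE):.3f} s")
print(f"128-bit key: {brute_force_seconds(128, RATE) / SECONDS_PER_YEAR:.2e} years")
```

The 128-bit case comes to roughly ten trillion years, hundreds of times the age of the universe, even at this optimistic rate.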

Furthermore, exascale is applied to cybersecurity to analyze large volumes of network traffic, detect anomalous patterns, and respond to advanced attacks. The ability to process data in near real time is essential for reacting quickly to sophisticated, persistent threats.
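
One of the simplest anomaly-detection rules flags any measurement that lies too many standard deviations from the mean (a z-score test). This Python sketch applies it to a hypothetical series of requests per second. Real systems work on streaming windows, many features at once, and more robust statistics, but the principle of separating normal traffic from outliers is the same.

```python
import statistics


def flag_anomalies(values: list[float], threshold: float = 3.0) -> list[int]:
    """Indices of values more than `threshold` standard deviations from the mean."""
    mean = statistics.fmean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []                      # perfectly flat series: nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mean) / stdev > threshold]


# Hypothetical requests per second: steady traffic, then one sudden burst.
traffic = [100.0, 102.0, 98.0, 101.0, 99.0] * 20 + [950.0]
print(flag_anomalies(traffic))         # [100]
```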

#ExascaleDay and the social dimension of the exascale

The so-called #ExascaleDay is celebrated internationally on October 18 (a nod to 10^18) as a kind of big day for the supercomputing community. The date commemorates the leap from petascale to exascale and pays tribute to the scientists, engineers, and technicians who have spent years pushing the boundaries of computing.

The idea of exascale supercomputers began to take shape more than a decade ago, when petascale systems started to fall short for certain lines of research. It became clear that, to fully understand systems as complex as the human body at multiple scales —from inside a cell to entire organs— or to model weather patterns from meters to thousands of kilometers, a qualitative leap in power was needed.

Since Frontier launched as the first operational exascale system, researchers have achieved spectacular milestones. For example, weather and climate prediction has reached the point of simulating a whole year of climate in just one day of computing; millions of biomedical publications have been made searchable online to speed up diagnoses and treatments; and tens of thousands of variants of the COVID-19 virus have been analyzed, cutting computation time from a week to about 24 hours.

These kinds of results show that exascale is not a technical curiosity reserved for a few research centers, but infrastructure with a direct impact on everyday life, from public health to responding to climate emergencies.

The international race for exascale leadership

Exascale supercomputing has become a field of economic and geopolitical competition. The United States, China, Japan, and the European Union invest billions in developing these infrastructures because they consider them strategic for science, industry, and national security, as well as for the semiconductor sector.

The United States currently leads the rankings with systems like Frontier and El Capitan, and has a long tradition of supercomputers such as Summit and Sierra. Japan, for its part, has stood out with Fugaku, a reference supercomputer delivering hundreds of petaflops that is also geared toward artificial intelligence applications and large-scale simulations.

China has had iconic systems such as Sunway TaihuLight, known for its high energy efficiency, and is known to keep developing highly advanced supercomputers, although not all of them are publicly disclosed. Europe is promoting initiatives such as EuroHPC to build a competitive network of supercomputing centers and foster its own industrial and scientific ecosystem around exascale.

In Europe, notable facilities include the Hawk supercomputer in Germany, used among other things for simulations in the automotive industry, and projects such as Jupiter, an exascale system scheduled to be operational by the end of 2024 that will strengthen Europe's role in this field.

Social, economic and ethical impact of the exascale

Beyond performance records, exascale raises a number of far-from-trivial social and ethical questions. One of the main debates revolves around how to ensure that the benefits of these technologies are distributed equitably rather than concentrated in a few countries or large corporations.

There is also concern about the environmental impact of building and maintaining data centers that consume so much energy. Although progress is being made in efficiency and the use of renewable energy, the carbon footprint and resource consumption remain variables that must be carefully monitored if we want exascale computing to fit into a sustainable development model.

In the field of artificial intelligence, exascale technology amplifies the ability to train massive models, which can lead to very powerful applications with implications for privacy, surveillance, and automated decision-making. Therefore, it is essential to accompany these advances with regulatory frameworks and public debates about the types of uses we want to encourage or limit.

In parallel, the exascale opens clear opportunities to address critical global challenges of the 21st century: from better modeling climate change and pandemics to designing more resilient infrastructure and improving communications security. The key will be to direct the enormous available computing power towards projects that provide real social value.

Looking at the whole picture, exascale computing represents a giant leap in computing power that is already translating into major scientific, industrial, and medical advances. At the same time, it forces us to rethink how we manage energy, data, security, and ethics in a world where supercomputers can perform a quintillion operations per second and influence, silently but decisively, the way we live and work.
