- The same prompts produce different results in Gemini, Copilot, and ChatGPT due to differences in model, integration, and product design.

- The accuracy, timeliness, and reliability of the responses depend on how you specify the context, format, and level of rigor for each assistant.

- Choosing the right type of model (fast or reasoning) and the right tool for each task is as important as the prompt itself.

- The best strategy is to test the same prompt in several assistants, compare clarity, actionability, and consistency, and assign each one to the use cases where it performs best.

The way you write a prompt doesn't work the same in ChatGPT, Gemini, and Copilot. All three rest on the idea of a "language model," but each has its own logic, memory, integrations, and even quirks. A message that works wonders in one may therefore elicit a weak or confusing response from another.

If you create content, program, study, or simply want to get more out of these tools, understanding how the model's behavior changes from one assistant to another is key. Here you'll see in detail the differences between prompts for Gemini, Copilot, and ChatGPT, what each one does "under the hood," how to compare them fairly, and what kind of instructions work best in each case.

What is the difference between ChatGPT, Gemini, and Copilot at the model level?

The assistant you use is not the same as the model that responds. This distinction explains why the same prompt behaves differently depending on the tool.

ChatGPT is an OpenAI product based on the GPT model family (up to GPT-5 in the most advanced versions). Its approach is generalist: conversation, writing, coding, explaining concepts, creativity, and so on. In the paid version you can choose between GPT-5 modes with more or less internal "thinking," which affects the depth of reasoning and the response time.

Gemini, Google's assistant, uses models from the Gemini 2.5 series (Flash and Pro), and you can also learn faster with Gemini Gems. Flash is designed to be very fast and lightweight, while Pro prioritizes reasoning, mathematics, programming, and complex tasks. In addition, Gemini handles text, images, and other formats (multimodality) particularly well, and integrates directly with Google Workspace and Google Search.

Microsoft Copilot does not have its own model in the strict sense: it uses OpenAI models (GPT-4 or GPT-5) and adds an orchestration layer called Prometheus. This layer connects the model to Bing, to your business data in Microsoft 365 (email, OneDrive, SharePoint, Teams), and to other signals from the Microsoft ecosystem, which significantly influences the type of prompt that suits it best.

Beyond these three, there are other assistants such as Claude, Perplexity, or Grok, which also influence how you design prompts when you work with several tools in parallel. But if we focus on Gemini, Copilot, and ChatGPT, the key idea is that each one combines a model, a set of integrations, and a distinct product layer. That's why the same instruction doesn't always yield the same results.

How they understand your query: classic search vs conversational AI

When you compare “searching on Google” with “asking a chatbot”, you are actually comparing two radically different ways of processing your text. That directly affects how you should write the prompt.

A classic search engine like Google Search crawls and indexes web pages, and when you make a query it matches your keywords against documents it already knows. It doesn't fully interpret your intent; it simply matches terms and returns links. Then it's up to you to visit several pages, dodge ads, and compare information to draw your own conclusions.

In contrast, ChatGPT, Gemini, and Copilot are conversational language models. Their goal isn't to show you a list of results but to generate a response directly. They first try to understand your intent, then pick out the key terms and, depending on the case, combine trained knowledge with web research to build a new text that answers what you asked.

That's why, with generative AI, it makes much more sense to write prompts in natural language, with context and clear objectives. Instead of "best phone 2024," something like "act like a smartphone expert, compare 5 current models for night photography with a maximum budget of €500" works better. That way of asking suits ChatGPT, Gemini, or Copilot far better than a classic search engine.
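As a minimal sketch of that idea, the snippet below assembles a prompt from a role, a task, and a list of constraints. The function name `build_prompt` and the "answer as a table" constraint are illustrative assumptions, not part of any assistant's API; the resulting string is simply what you would paste into the chat box.

```python
def build_prompt(role: str, task: str, constraints: list[str]) -> str:
    """Combine a role, a task, and constraints into one natural-language prompt.

    Hypothetical helper for illustration only; it just builds a string.
    """
    parts = [f"Act as {role}.", task.strip()]
    if constraints:
        parts.append("Constraints: " + "; ".join(constraints) + ".")
    return " ".join(parts)


prompt = build_prompt(
    role="a smartphone expert",
    task="Compare 5 current models for night photography.",
    # The second constraint is an added example, not from the article.
    constraints=["maximum budget of €500", "answer as a table"],
)
print(prompt)
```

Keeping role, task, and constraints as separate arguments makes it easy to reuse the same task across assistants while changing only one piece at a time.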

Furthermore, these assistants don't just return plain text: they can produce tables, step lists, long summaries, or video scripts. A traditional search engine doesn't do this directly. The structure of what they generate depends heavily on how well you structure your prompt.

Up-to-date information and accuracy, depending on the AI

One of the biggest fears when using AI is whether what it says is up to date. There are important nuances here between Gemini, Copilot, and ChatGPT, and they determine how much you need to specify in the prompt.

When you browse with Google, freshness depends on each website. If the site is up to date, you'll see the latest version; otherwise, no matter how modern the search engine is, the information will still be outdated. Your search engine "prompt" essentially boils down to choosing good keywords.

Things are different with AI assistants. If the model connects to the Internet in real time, it can retrieve recent content, though not always minute by minute. Gemini and Copilot tend to rely heavily on current web data, while ChatGPT has gradually incorporated web browsing, closing the gap it had when it relied only on older training data.

What does this mean for your prompts? That it makes sense to ask explicitly for recent data. Phrases like “with information updated to 2024”, “use recent sources and add links” or “indicate if you are unsure of the date of the data” help the AI steer its search and be honest about its time limits.

Furthermore, all these systems can suffer from hallucinations: incorrect answers expressed with great confidence. That's why a good prompt in Gemini, Copilot, or ChatGPT usually includes explicit requests for citations or references, such as “indicate the URLs from which you get the information” or “clearly separate proven facts from hypotheses or assumptions”.
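One way to make these requests a habit is to append them automatically to whatever you ask. The sketch below assumes you keep the phrases in a list and add them as bullet points at the end of the prompt; the names `RECENCY_AND_SOURCE_CLAUSES` and `with_guardrails` are hypothetical, and the wording of the clauses is taken from the examples above.

```python
# Clauses adapted from the example phrases in the text.
RECENCY_AND_SOURCE_CLAUSES = [
    "Use recent sources and add links.",
    "Indicate if you are unsure of the date of the data.",
    "Indicate the URLs from which you get the information.",
    "Clearly separate proven facts from hypotheses or assumptions.",
]


def with_guardrails(prompt: str, clauses: list[str] = RECENCY_AND_SOURCE_CLAUSES) -> str:
    """Return the prompt followed by a bullet list of extra requirements."""
    lines = [prompt.strip(), "", "Additional requirements:"]
    lines += [f"- {clause}" for clause in clauses]
    return "\n".join(lines)


guarded = with_guardrails("Summarize the current state of solid-state batteries.")
print(guarded)
```

The clauses do not guarantee a correct answer; they only make it more likely that the assistant flags uncertainty and shows where its claims come from.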

Differences in ease of use and experience when writing prompts

The “ease of use” of each assistant is not just about interface design: it also determines how much effort you have to put into the prompt to get what you want.

In a traditional search engine, you control the navigation: you choose which pages to visit, which tabs to open, and how to combine sources. The "prompt" is limited to a few words and aids such as quotation marks or Boolean operators. It is a more manual and often slower process, but it gives you finer control over the results.

Conversational AI, on the other hand, removes the intermediate steps. You ask a question and get a pre-digested summary in return. That's fantastic when you get the prompt right, but it forces you to be clearer about your objective: desired length, format, tone, language, target audience, and so on. A common mistake is to ask vague questions and then blame the model for giving a generic answer.

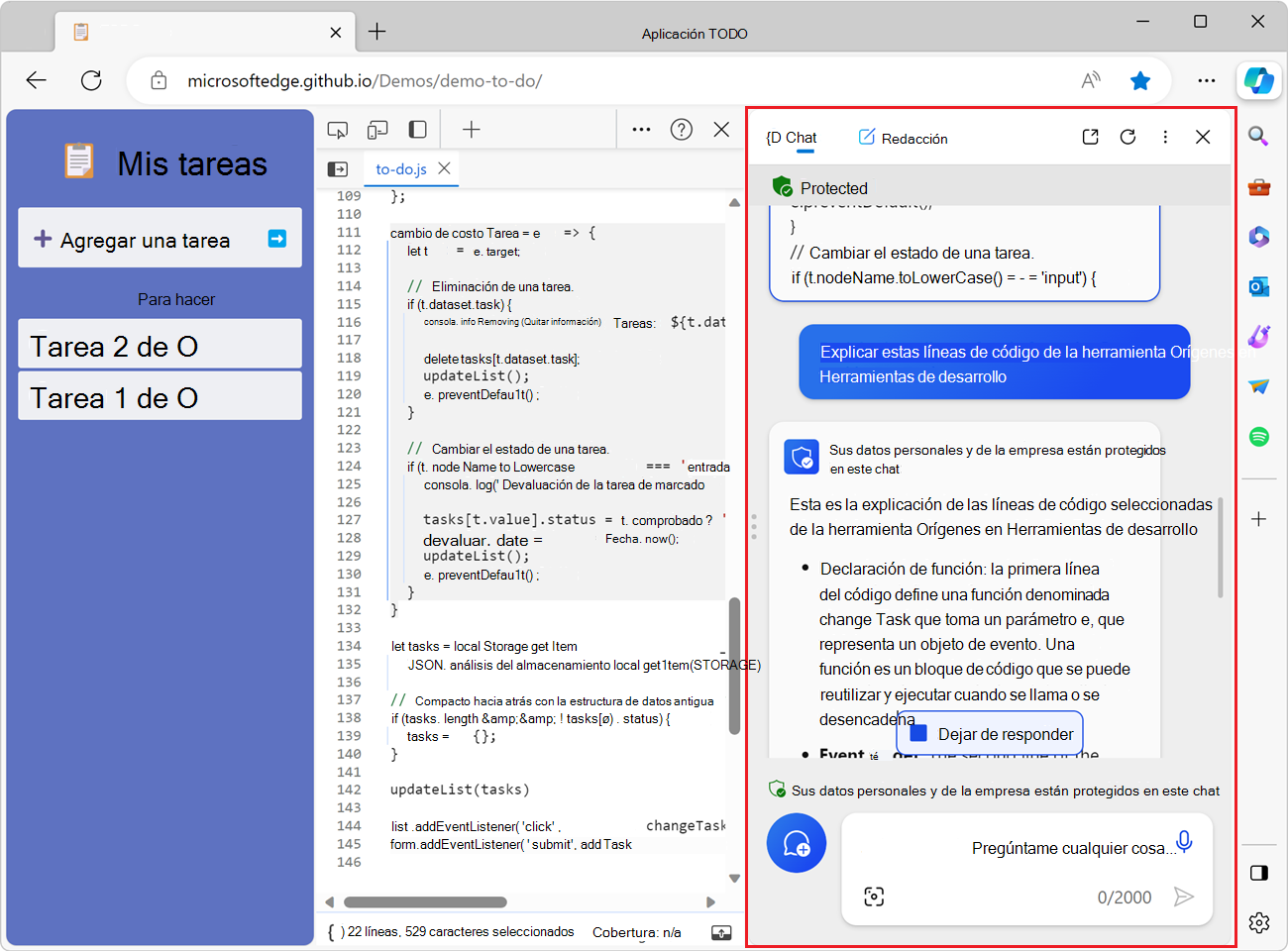

At this point, there are nuances between tools. Copilot shines when you use it within Word, Excel, Outlook, or Teams in Microsoft 365. The prompt can be very short (“summarize this meeting”, “make a report with this data”) because the context comes from the documents themselves. Gemini does something similar within Google Workspace, where it has a better grasp of your Docs, Sheets, and Gmail. ChatGPT, on the other hand, usually requires you to paste in the context yourself (or upload files), but in return it offers a lot of flexibility in the style and format of the response.

Ultimately, how easily you write prompts also depends on your working style: if you're used to writing long, structured instructions, ChatGPT and Gemini reward that effort. If you prefer quick requests tied to what you already have open in Word or Excel, Copilot makes the job much easier.

Reliability of responses and how to protect yourself with good prompts

Reliability is where a good prompt matters most: it can make all the difference between a useful text and an elegant blunder. Here, the technical limitations of the model combine with the way you phrase the request.

With Google Search, the responsibility lies with you: you choose sources, compare versions, and spot unreliable pages. Google uses algorithms to assess authority and relevance, but dubious websites that have "tricked" the algorithm still slip through. Your critical thinking is worth more than any keyword.

With ChatGPT, Gemini, and Copilot, the AI does that filtering for you: it gathers information from various sources and rewrites it into a single response. The problem is that it can mix correct data with errors or reflect only a partial version. That's what we call hallucinations: erroneous answers that the model presents as if they were completely valid.

To reduce the risk, it's a good idea to add phrases like these to your prompts: “If you’re not sure, say so,” “cite at least 3 verified sources,” “mark anything speculative with a warning,” or “don’t make up data, limit your answer to what you can back up with sources.” This doesn't eliminate the risk completely, but it pushes the model to be somewhat more conservative.

It also helps a lot to specify the level of rigor you want. Asking the assistant to "explain quantum computing to me as if I were a 10-year-old" is not the same as asking it to "give me an academic explanation with references to papers." If the prompt is incomplete, the answer will fall back on comfortable generalities, where it's easier for an inaccurate detail to slip in unnoticed.

Practical examples: how responses change with the same prompt

When you give ChatGPT, Gemini, and Copilot the same prompt, clear differences appear in depth, format, creativity, and ability to synthesize. Several comparative analyses and reproducible experiments show fairly consistent patterns.

For example, if you ask “Explain to me what quantum computing is in exactly 100 words,” you're measuring clarity, conciseness, and adherence to constraints. Some models meet the limit exactly, while others miss it by a couple of words or cut too much content. The latest ChatGPT and Gemini models tend to excel here, while Copilot, depending on the version, can be somewhat looser with the word count.
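To check the word limit yourself rather than by eye, a small helper is enough. The sketch below assumes a simple whitespace-based count (so "state-of-the-art" counts as one word); `word_count_deviation` is a hypothetical name, and you would paste each assistant's answer in as `response`.

```python
def word_count_deviation(response: str, target: int = 100) -> int:
    """Return how many words the response is over (+) or under (-) the target.

    Words are counted by splitting on whitespace, which is an approximation.
    """
    return len(response.split()) - target


# Toy response used only to show the call; real answers would be pasted in.
sample = "Quantum computing uses qubits instead of bits."
print(word_count_deviation(sample, target=7))
```

A result of 0 means the constraint was met exactly; running the same check on every assistant's answer gives you a comparable number instead of an impression.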

In summarizing and rephrasing tasks, with requests like “give me a 3-point summary of this text” or “rewrite this paragraph in an academic tone without changing the meaning,” all three do well, but with nuances. ChatGPT tends to offer very readable summaries, Gemini usually stays closer to the original logical structure, and Copilot favors a corporate style when used within Microsoft 365.

When you hand them email review or professional rewriting, prompts such as “fix this email by correcting spelling and grammar” or “make it more polite but very assertive and professional, asking for read receipts” gauge their linguistic sensitivity. ChatGPT and Claude (when included in the comparison) excel at nuances of tone; Gemini and Copilot tend to be somewhat more formal, which can be perfect for a business environment.

In pure creativity, for example if you ask "give me 10 original ideas for using technology in the primary classroom without resorting to clichés like using tablets," ChatGPT and Copilot usually generate proposals with more narrative texture and emotional references, while Gemini, in many cases, offers something more basic (genre, setting, and a couple of elements) and leaves the details up to you.

Thread memory and coherence in prompt chains

Another area where differences show up is conversational memory and coherence across multiple turns. If you chain several instructions together, not all tools maintain the context equally well.

Imagine this sequence in the same chat: “explain the layers of the atmosphere,” then “compare them to the floors of a building,” then “make up 5 exam questions about this,” and finally, “turn it all into a TikTok script for kids.” A model with good memory should maintain the metaphor and the scientific content and adapt them to the new format without contradicting itself.

In this type of test, ChatGPT and Claude usually show very solid consistency, while Gemini and Copilot may lose some nuance of the metaphor in the later steps if the conversation drags on or becomes too complex. This doesn't mean they're bad, but your prompt should occasionally remind the model of the context: "use the building-atmosphere comparison we created earlier."
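The reminder technique can be sketched as a small transformation over the chain of prompts. The helper below is a hypothetical illustration: it assumes you prepare the prompts as a list and want the reminder appended from a given step onward (here step 3, after the metaphor has been introduced).

```python
def with_context_reminder(prompts: list[str], reminder: str, remind_from: int = 3) -> list[str]:
    """Append `reminder` to every prompt from step `remind_from` (1-based) onward."""
    result = []
    for step, prompt in enumerate(prompts, start=1):
        result.append(f"{prompt} ({reminder})" if step >= remind_from else prompt)
    return result


chain = with_context_reminder(
    [
        "Explain the layers of the atmosphere.",
        "Compare them to the floors of a building.",
        "Make up 5 exam questions about this.",
        "Turn it all into a TikTok script for kids.",
    ],
    reminder="use the building-atmosphere comparison we created earlier",
)
for message in chain:
    print(message)
```

The first two prompts stay untouched; only the later steps, where context tends to drift, carry the explicit reminder.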

With structural transformation prompts, for example "convert this text into a table with three columns: problem, cause, solution," all three assistants perform well. The key is that the prompt clearly specifies the format and the columns; if it does, Gemini, ChatGPT, and Copilot usually generate coherent tables that are easy to export.
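If you want to verify that a response respects the columns you asked for, a quick check is possible when the assistant answers with a Markdown-style table. That output format is an assumption (assistants may return other formats), and `table_header` is a hypothetical helper that only reads the first row starting with a pipe character.

```python
def table_header(markdown_table: str) -> list[str]:
    """Return the lowercase column names from the first row of a Markdown table."""
    first_row = next(
        line for line in markdown_table.splitlines() if line.strip().startswith("|")
    )
    return [cell.strip().lower() for cell in first_row.strip().strip("|").split("|")]


# Example response text, written by hand for illustration.
response = """| Problem | Cause | Solution |
|---|---|---|
| Slow page | Large images | Compress images |"""

expected = ["problem", "cause", "solution"]
print(table_header(response) == expected)
```

Running the same check on each assistant's answer quickly shows which ones kept the requested structure.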

In personal and professional productivity tasks, such as “reframe this email to be polite but firm, asking for read receipts”, the character of each tool also shows: Copilot tends to sound very corporate, Gemini quite neutral, and ChatGPT somewhat more flexible in tone, especially if you give it examples beforehand.

Tools, models, and reasoning: why it matters for your prompts

Behind what you see on screen there is another layer: the type of model you choose within each assistant, and whether it's optimized for speed or for deep reasoning. This directly influences how the prompt should be written.

In Gemini you can switch between 2.5 Flash and 2.5 Pro. Flash is lightning fast and ideal for quick queries, summaries, and simple tasks. Pro, on the other hand, spends more internal time planning the response, which shows in complex analyses, math, or code. If your prompt requires several logical steps ("think step by step"), it's better to use Pro.

In paid ChatGPT you can choose modes within GPT-5 with different levels of “thinking.” Reasoning modes do more internal work before responding, which improves quality on complex problems but increases latency. To get the most out of them, it makes sense for the prompt to ask explicitly for breakdowns, evaluation of alternatives, and justification and, if in doubt, for help choosing which AI to use.

Copilot lets you move between GPT-4 and GPT-5. It also combines the model with the Prometheus layer to cross-reference data from your Microsoft environment. For queries about internal documents, meetings, or OneDrive files, a prompt that precisely references those resources (“use the latest sales report from this channel”) can be much more effective than a generic query without context. There are also Copilot prompts for specific management tasks in Windows environments.

A practical way to decide is to ask yourself: does my problem need a step-by-step plan, or is a straightforward answer enough? If it's the latter (simple definitions, basic concepts, minor corrections), a fast model is usually sufficient. If you need to cross-reference data, compare alternatives, or design processes, you'll want a reasoning model and a more elaborate prompt.
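That question can be turned into a rough rule of thumb. The sketch below is a deliberately crude, keyword-based heuristic: the hint words come from the examples above, the function name `pick_model_kind` is hypothetical, and a real decision would still depend on your judgment of the task.

```python
import re

# Words that, per the examples in the text, suggest multi-step work.
REASONING_HINTS = {"compare", "cross-reference", "design", "plan"}


def pick_model_kind(task: str) -> str:
    """Return "reasoning" if the task mentions a multi-step hint word, else "fast"."""
    words = set(re.findall(r"[a-z-]+", task.lower()))
    return "reasoning" if words & REASONING_HINTS else "fast"


print(pick_model_kind("Give me a short explanation of DNS"))
print(pick_model_kind("Compare three pricing alternatives and justify a choice"))
```

Matching whole words rather than substrings avoids false positives such as "explanation" triggering the "plan" hint.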

Multimodality and images: how the response varies according to the prompt

Another big difference between assistants is how they handle images and other formats, both for analyzing and for generating them. Here, too, the prompts vary considerably from one tool to another.

If you ask ChatGPT, Copilot, or Gemini to describe a photo (“describe this image and explain what’s happening in it”), you’re measuring their combined vision and language ability. You can also use MAI-Image for this type of task and to compare results.

In image generation, prompts like “generate a flat design illustration, pastel colors, of a person studying with a laptop, soft background, and minimalist shadows” show how the model interprets style, composition, and consistency. Gemini typically excels in style control and text integration, while Copilot and ChatGPT (depending on the version) have distinct strengths in visual detail and fidelity to instructions.

There are some particularly useful tests, for example: request an image with several very specific elements (“person reading, plant on the right, bookshelf in the background, warm light, soft watercolor style”) and then ask the model whether it has met each requirement. This reveals its ability to self-evaluate, which is key if you want consistent prompts for branding or design projects.
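The two-step test described above can be prepared in advance so that the generation prompt and the follow-up checklist always list the same requirements. This is a minimal sketch with hypothetical helper names (`image_prompt`, `self_check_prompt`); both functions only build text to paste into the assistant.

```python
def image_prompt(elements: list[str], style: str) -> str:
    """Build the generation request from a list of required elements and a style."""
    return f"Generate an image with: {', '.join(elements)}. Style: {style}."


def self_check_prompt(elements: list[str], style: str) -> str:
    """Build a follow-up asking the model to confirm each requirement one by one."""
    items = "\n".join(
        f"{number}. {item}" for number, item in enumerate(elements + [style], start=1)
    )
    return (
        "For the image you just generated, answer yes or no for each requirement:\n"
        + items
    )


elements = ["person reading", "plant on the right", "bookshelf in the background", "warm light"]
style = "soft watercolor"
print(image_prompt(elements, style))
print(self_check_prompt(elements, style))
```

Because both prompts are derived from the same list, no requirement can be silently dropped from the self-evaluation step.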

Also interesting are prompts that require identity consistency, such as “generate a portrait of a person with these characteristics and then three variations keeping the same face but with changes in pose and lighting.” Many models fail here, forcing you to refine the prompt (“keep the facial features exactly as described above”) or use specific tools.

Typical use cases and what each assistant excels at

Beyond the model, what matters a great deal is what you are using AI for. ChatGPT, Gemini, and Copilot overlap in many areas, but each has its own strengths and weaknesses, and this influences the type of prompt that performs best.

ChatGPT has become the most versatile option for daily tasks: writing, brainstorming, learning, explaining concepts, basic coding help, and so on. Its prompts work very well when you include role, context, objective, and format: "Act as a physics teacher, explain this to me in 5 steps, with simple examples, and then give a mini-quiz."

Gemini shines in multimodality and in the Google ecosystem. If you work with Google Docs, Sheets, Gmail, or Drive, prompts that leverage these resources (“summarize this Drive document and create a table with the key data”) save a lot of time. It is also very useful for tasks that combine text and images or that require real-time search support.

Copilot is strongly focused on business productivity and code. Within Word or Excel, prompts like “generate a one-page report with this data, ready to send to the CFO” or “create a comparison table with key KPIs from this sheet” work particularly well. And in GitHub Copilot, the prompts focus more on the code context: clear comments within the file, descriptions of the function you want, and so on.

Meanwhile, assistants such as Perplexity specialize in real-time knowledge search with citations, Claude prioritizes precision and safety in regulated environments, and Grok excels in quick conversations on social media. If you combine several assistants, you can divide tasks: one for researching with references, another for polished writing, and another for integrating the result into your office suite.

Ultimately, choosing where to send the prompt is almost as important as how you write it. A good habit is to assign each type of problem to the assistant that solves it best rather than trying to make one tool do everything, especially in professional settings.
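One simple way to build that habit is to keep a score sheet. The sketch below assumes you rate each answer from 1 to 5 on clarity, actionability, and consistency and then pick the highest average per task type. The assistant labels and every score here are placeholders for illustration, not measurements of any real tool.

```python
from statistics import mean

# Placeholder ratings (1-5) that you would fill in after your own tests.
scores = {
    "summaries": {
        "assistant_a": {"clarity": 4, "actionability": 3, "consistency": 4},
        "assistant_b": {"clarity": 5, "actionability": 4, "consistency": 4},
    },
    "spreadsheet reports": {
        "assistant_a": {"clarity": 4, "actionability": 5, "consistency": 4},
        "assistant_b": {"clarity": 3, "actionability": 3, "consistency": 4},
    },
}


def best_per_task(score_sheet: dict) -> dict:
    """Map each task type to the assistant with the highest average rating."""
    return {
        task: max(ratings, key=lambda name: mean(list(ratings[name].values())))
        for task, ratings in score_sheet.items()
    }


print(best_per_task(scores))
```

With the placeholder values above, each task type ends up assigned to a different assistant, which is the outcome the score sheet is meant to surface when it reflects your own tests.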

After seeing how they think, what models they use, how they integrate with your tools, and how they respond to different types of prompts, it becomes clear that there is no universal "best" assistant, only combinations of tool, model, and way of asking that fit better or worse with what you do every day. The key is to experiment with the same prompts in Gemini, Copilot, and ChatGPT, note which one understands you best for each type of task, and keep the one that “speaks your language” for studying, working, or creating, using the rest as support when their specialty suits you better.
